Deploying n8n on Digital Ocean

This guide shows you how to deploy n8n, a workflow automation tool, on your own VPS. Self-hosting gives you full control over your data, avoids monthly subscription costs, and lets you run unlimited workflows without usage limits.

I'm using Digital Ocean [1] for this guide, but these steps work on any VPS provider. You'll need:

- A VPS with Ubuntu 24.04 (minimum 1GB RAM)

- A domain name with DNS access

- Basic familiarity with SSH and command line tools

Create and configure the VPS

Create a droplet with Ubuntu 24.04. Select a plan with at least:

- 1GB RAM

- 25GB Disk

- 1 vCPU

Note the IP address - you'll need it for DNS configuration.

SSH into the server:

ssh root@ipaddress

Update the system:

apt update

apt upgrade -y

Install Docker

Install Docker using the official repository:

# Add Docker's official GPG key
sudo apt-get update
sudo apt-get install ca-certificates curl
sudo install -m 0755 -d /etc/apt/keyrings
sudo curl -fsSL https://download.docker.com/linux/ubuntu/gpg -o /etc/apt/keyrings/docker.asc
sudo chmod a+r /etc/apt/keyrings/docker.asc

# Add the repository to Apt sources
echo \
  "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.asc] https://download.docker.com/linux/ubuntu \
  $(. /etc/os-release && echo "${UBUNTU_CODENAME:-$VERSION_CODENAME}") stable" | \
  sudo tee /etc/apt/sources.list.d/docker.list > /dev/null
sudo apt-get update

# Install Docker and its components
sudo apt-get install docker-ce docker-ce-cli containerd.io docker-buildx-plugin docker-compose-plugin
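Before moving on, it can help to confirm that the Docker CLI and the Compose plugin are actually usable. The following is a minimal Python sketch, not part of the original steps. The function name `docker_ready` is made up for illustration, and it relies only on the `docker compose version` command that the Compose plugin provides.

```python
import shutil
import subprocess


def docker_ready(which=shutil.which, run=subprocess.run) -> bool:
    """True when the docker CLI is on PATH and the compose plugin responds.

    `which` and `run` are injectable so the check can be exercised
    without Docker installed (an assumption made for testability).
    """
    if which("docker") is None:
        return False
    # `docker compose version` exits non-zero when the plugin is missing.
    result = run(["docker", "compose", "version"], capture_output=True, text=True)
    return result.returncode == 0
```

Calling `docker_ready()` on the server should return `True` once the install above has finished.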

Configure DNS

Create an A record at your domain registrar pointing your subdomain (e.g., n8n.yourdomain.com) to your droplet's IP address. If you're using Hover, follow their DNS management guide.
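Caddy's automatic HTTPS generally needs the domain to resolve to the server before it can obtain a certificate, so it is worth checking that the A record has propagated before starting the containers. Here is a small Python sketch of that check using only the standard library. The helper names and the example IP address are assumptions for illustration.

```python
import socket
from typing import Callable, Iterable


def resolve_ipv4(hostname: str) -> set[str]:
    """Return the set of IPv4 addresses the hostname currently resolves to."""
    try:
        infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET)
    except socket.gaierror:
        # Name does not resolve (yet): treat as "no addresses".
        return set()
    # Each entry is (family, type, proto, canonname, sockaddr);
    # for AF_INET, sockaddr is (address, port).
    return {info[4][0] for info in infos}


def dns_points_to(hostname: str, expected_ip: str,
                  resolver: Callable[[str], Iterable[str]] = resolve_ipv4) -> bool:
    """True when expected_ip is among the addresses returned for hostname."""
    return expected_ip in set(resolver(hostname))
```

For example, `dns_points_to("n8n.yourdomain.com", "203.0.113.10")` returns `True` once the record points at that address. Substitute your own subdomain and droplet IP.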

Create Docker Compose configuration

Create a docker-compose.yml file on your server. Start with the Caddy service for handling SSL and reverse proxy:

services:
  caddy:
    image: caddy:latest
    ports:
      - "80:80"
      - "443:443"
    restart: always
    volumes:
      - ./Caddyfile:/etc/caddy/Caddyfile:ro
      - caddy_data:/data
      - caddy_config:/config
      - ./logs:/var/log/caddy
    deploy:
      resources:
        limits:
          cpus: '0.5'
          memory: 500M
    healthcheck:
      test: ["CMD", "caddy", "version"]
      interval: 30s
      timeout: 10s
      retries: 3
    logging:
      driver: "json-file"
      options:
        max-size: "10m"
        max-file: "3"

volumes:
  caddy_data:
  caddy_config:

Create a Caddyfile in the same directory, replacing n8n.mydomain.com with your actual domain:

n8n.mydomain.com {
    # Enable compression
    encode gzip zstd

    # Reverse proxy to n8n
    reverse_proxy n8n:5678 {
        header_up Host {host}
        header_up X-Real-IP {remote}
        header_up X-Forwarded-For {remote}
        header_up X-Forwarded-Proto {scheme}
        header_up X-Forwarded-Host {host}
        transport http {
            keepalive 30s
            keepalive_idle_conns 10
        }
        flush_interval -1
    }

    # Security headers (relaxed CSP for n8n's dynamic interface)
    header {
        Strict-Transport-Security "max-age=31536000; includeSubDomains; preload"
        X-Content-Type-Options "nosniff"
        X-Frame-Options "SAMEORIGIN"
        Referrer-Policy "strict-origin-when-cross-origin"
        Content-Security-Policy "default-src 'self'; script-src 'self' 'unsafe-inline' 'unsafe-eval'; style-src 'self' 'unsafe-inline'; img-src 'self' data: blob:; connect-src 'self' wss: ws:; frame-src 'self'; worker-src 'self' blob:;"
        -Server
    }

    # Enable logging
    log {
        output file /var/log/caddy/n8n-access.log {
            roll_size 10MB
            roll_keep 5
        }
        format json
    }

    # Enable TLS with reasonable settings
    tls {
        protocols tls1.2 tls1.3
    }
}

Add n8n to Docker Compose

Add the n8n service under services: in your docker-compose.yml file. Replace n8n.mydomain.com with your domain in the environment variables:

  n8n:
    image: n8nio/n8n:latest
    container_name: n8n
    restart: always
    environment:
      - N8N_HOST=n8n.mydomain.com
      - N8N_PORT=5678
      - WEBHOOK_URL=https://n8n.mydomain.com/
      - GENERIC_TIMEZONE=UTC
    ports:
      - "5678:5678"
    volumes:
      - n8n_data:/home/node/.n8n
      - /etc/timezone:/etc/timezone:ro
      - /etc/localtime:/etc/localtime:ro
    deploy:
      resources:
        limits:
          cpus: '1.0'
          memory: 1G
    healthcheck:
      test: ["CMD", "wget", "--no-verbose", "--tries=1", "--spider", "http://localhost:5678/healthz"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 60s
    logging:
      driver: "json-file"
      options:
        max-size: "10m"
        max-file: "3"

Add n8n_data: to the volumes: section in your docker-compose.yml file:

volumes:
  caddy_data:
  caddy_config:
  n8n_data: # new line

Your final docker-compose.yml file will look like this:

services:
  caddy:
    image: caddy:latest
    ports:
      - "80:80"
      - "443:443"
    restart: always
    volumes:
      - ./Caddyfile:/etc/caddy/Caddyfile:ro
      - caddy_data:/data
      - caddy_config:/config
      - ./logs:/var/log/caddy
    deploy:
      resources:
        limits:
          cpus: '0.5'
          memory: 500M
    healthcheck:
      test: ["CMD", "caddy", "version"]
      interval: 30s
      timeout: 10s
      retries: 3
    logging:
      driver: "json-file"
      options:
        max-size: "10m"
        max-file: "3"

  n8n:
    image: n8nio/n8n:latest
    container_name: n8n
    restart: always
    environment:
      - N8N_HOST=n8n.mydomain.com
      - N8N_PORT=5678
      - WEBHOOK_URL=https://n8n.mydomain.com/
      - GENERIC_TIMEZONE=UTC
    ports:
      - "5678:5678"
    volumes:
      - n8n_data:/home/node/.n8n
      - /etc/timezone:/etc/timezone:ro
      - /etc/localtime:/etc/localtime:ro
    deploy:
      resources:
        limits:
          cpus: '1.0'
          memory: 1G
    healthcheck:
      test: ["CMD", "wget", "--no-verbose", "--tries=1", "--spider", "http://localhost:5678/healthz"]
      interval: 30s
      timeout: 10s
      retries: 3
      start_period: 60s
    logging:
      driver: "json-file"
      options:
        max-size: "10m"
        max-file: "3"

volumes:
  caddy_data:
  caddy_config:
  n8n_data:
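Since the placeholder domain appears in both the Caddyfile and docker-compose.yml, a small script can swap it in one pass. This is a hedged sketch rather than part of the original guide. The function names are hypothetical, and it assumes both files sit in the same directory and still contain the literal placeholder n8n.mydomain.com.

```python
from pathlib import Path

PLACEHOLDER = "n8n.mydomain.com"  # placeholder domain used in this guide


def set_domain(text: str, domain: str, placeholder: str = PLACEHOLDER) -> str:
    """Return text with every occurrence of the placeholder replaced."""
    if not domain or " " in domain or "/" in domain:
        raise ValueError(f"not a bare hostname: {domain!r}")
    return text.replace(placeholder, domain)


def update_files(domain: str, directory: Path = Path("."),
                 names: tuple[str, ...] = ("Caddyfile", "docker-compose.yml")) -> list[Path]:
    """Rewrite each existing file in place and return the ones that changed."""
    changed = []
    for name in names:
        path = directory / name
        if not path.exists():
            continue  # skip files that have not been created yet
        original = path.read_text()
        updated = set_domain(original, domain)
        if updated != original:
            path.write_text(updated)
            changed.append(path)
    return changed
```

Running `update_files("n8n.example.org")` from the directory that holds both files would rewrite them in place. Running it a second time changes nothing, because the placeholder is already gone.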

Start the containers

Run the containers in detached mode:

docker compose up -d
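The n8n container can take a little while to come up (its healthcheck allows a 60 second start period), so a short polling script can confirm the instance is reachable through Caddy. This is a minimal sketch that reuses the /healthz path from the healthcheck. The function names, retry count, and delay are assumptions, not values from n8n or Caddy.

```python
import time
import urllib.error
import urllib.request
from typing import Callable


def http_status(url: str, timeout: float = 5.0) -> int:
    """Return the HTTP status code for url, or 0 if the request fails."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # server answered, but with an error status
    except (urllib.error.URLError, OSError):
        return 0  # DNS failure, refused connection, TLS error, timeout


def wait_until_healthy(url: str, attempts: int = 10, delay: float = 6.0,
                       fetch: Callable[[str], int] = http_status,
                       sleep: Callable[[float], None] = time.sleep) -> bool:
    """Poll url until it returns 200, giving up after `attempts` tries."""
    for attempt in range(attempts):
        if fetch(url) == 200:
            return True
        if attempt < attempts - 1:
            sleep(delay)  # no need to wait after the final attempt
    return False
```

For example, `wait_until_healthy("https://n8n.yourdomain.com/healthz")` returns `True` once Caddy has a certificate and n8n is answering.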

Complete the setup

Navigate to https://n8n.yourdomain.com in your browser. Follow the setup wizard to create your admin account. Once complete, you can start building workflows.

[1] Referral link

Why We Need to Stop Fighting About AI Tools and Start Teaching Them

In mid-June, Hynek tooted the following on Mastodon:

Watching the frustratingly fruitless fights over the USEFULNESS of LLM-based coding helpers, I've come down to 3 points that explain why ppl seem to live in different realities:

Most programmers:

1) Write inconsequential remixes of trivial code that has been written many times before.

2) Lack the taste for good design & suck at code review in general (yours truly included).

3) Lack the judgement to differentiate between 1) & FOSS repos of nontrivial code, leading to PR slop avalanche.

1/3

So, if you're writing novel code & not another CRUD app or API wrapper, all you can see is LLMs fall on their faces.

Same goes for bigger applications if you care about design. Deceivingly, if you lack 2), you won't notice that an architecture is crap b/c it doesn't look worse than your usual stuff.

That means that the era of six figures for CRUD apps is coming to an end, but it also means that Claude Code et al can be very useful for certain tasks. Not every task involves splitting atoms.

2/3

There's also a bit of a corollary here. Given that LLMs are stochastic parrots, the inputs determine the outputs.

And, without naming names, certain communities are more… rigorous… at software design than others.

It follows that the quality of LLM-generated code will inevitably become a decision factor for choosing frameworks and languages and I'm not sure if I'm ready for that.

3/3

I've had a lot of success with Claude Code recently, so I've been thinking about this toot a lot. Simon Willison talks a lot about the things that he's been able to do because he just asks OpenAI's ChatGPT while walking his dog. He's asking a coding agent to help him with ideas he has in languages with which he may not be familiar. However, he's a good enough programmer that he can spot anti-patterns that are being written by the agent.

For me, it comes down to how helpful these agentic coding tools are: they help me write boilerplate code more quickly. When something is trivially easy to implement, like another CRUD app or an API wrapper, that problem is already solved, and we don't need to keep re-solving it in ways that don't really help. What we need to do in order to be better programmers is figure out how to solve problems most effectively. And if that's creating a CRUD app or an API wrapper or whatever, then yeah, you're not solving any huge problem there. But if you're looking to solve something in a novel way, agentic coding tools aren't going to help you as much.

I don't need to know how the internal combustion engine of my car works. I do need to know that when the check engine light comes on, I need to take it to a mechanic. And then that mechanic is going to use some device that lets them know what is wrong with the car and what needs to be done to fix it. This seems very analogous to the coding agents that we're seeing now. We don't have to keep trying to solve those problems with well-known solutions. We can and we should rely on the knowledge that is available to us and use that knowledge to solve these problems quickly. This allows us to focus on trying to solve new problems that no one has ever seen.

This doesn't mean we can skip learning the fundamentals. Like blocking and tackling in football, if you can't handle the basic building blocks of programming, you're not going to succeed with complex projects. That foundational understanding remains essential.

The real value of large language models and coding agents lies in how they can accelerate that learning process. Being able to ask an LLM about how a specific GitHub action works, or why you'd want to use a particular pattern, creates opportunities to understand concepts more quickly. These tools won't solve novel problems for you—that's still the core work of being a software developer. But they can eliminate the repetitive research and boilerplate implementation that used to consume so much of our time, freeing us to focus on the problems that actually require human creativity and problem-solving skills.

How many software developers write in assembly anymore? Some of us maybe, but really what it comes down to is that we don't have to. We've abstracted away a lot of that particular knowledge set to a point where we don't need it anymore. We can write code in higher-level languages to help us get to solutions more quickly. If that's the case, why shouldn't we use LLMs to help us get to solutions even more quickly?

I've noticed a tendency to view LLM-assisted coding as somehow less legitimate, but this misses the opportunity to help developers integrate these tools thoughtfully into their workflow. Instead of questioning the validity of using these tools, we should be focusing on how we can help people learn to use them effectively.

In the same way that we helped people learn how to use Google, we should help them use large language models. Back in the early 2000s, when Google was just starting to become a thing, not everybody knew how to use it effectively: how to exclude specific terms, or how to search for exact phrases using quotation marks. But the people who did know were able to find things more effectively.

I see a parallel here. Instead of dismissing people who use these tools, we should be asking more constructive questions: How do we help them become more effective with LLMs? How do we help them use these tools to actually learn and grow as developers?

Understanding the limitations of large language models is crucial to using them well, but right now we're missing that opportunity by focusing on whether people should use them at all rather than how they can use them better.

We need to take a step back and re-evaluate how we use LLMs and how we encourage others to use them. The goal is getting to a point where we understand that LLMs are one more tool in our developer toolkit, regardless of whether we're working on open-source projects or commercial software. We don't need to avoid these tools. We just need to learn how to use them more effectively, and we need to do this quickly.

Migrating to Raindrop.io

With the announced demise of Pocket by Mozilla I needed to migrate all of my saved articles to 'something else' by the end of the month. I've actually tried to migrate from Pocket a few times over the years. I landed on Instapaper for a while, but it never really clicked for me. I tried a service called Devmarks that Adam G Hill runs, and I really liked it, but for whatever reason I stopped using it. I had also previously tried Raindrop.io ... and I'm not really sure what drove me away from it, but it didn't stick for me at the time.

Since I didn't have a choice about Pocket, I did a bit of perusing of my options and finally landed on Raindrop.io again. The process of migration is pretty painless. I just export the links from Pocket and then import them into Raindrop. No fuss ... no muss. Raindrop even checks for duplicates and lets you skip importing them!

So, I imported everything (all 11,500+ articles!) and started to incorporate Raindrop into my workflow. This basically just means saving things to Raindrop instead of Pocket, and then checking Raindrop instead of Pocket every week to make sure I'm all caught up on my articles to read.

Over the last weekend I was looking at how all of the imported items in Raindrop were put into the 'archive' collection and decided that I could probably do something about putting them into proper collections.

With the help of Claude Code, I was able to put them into better collections. There were some stragglers and I decided that I could categorize them on my own (there were fewer than 100).

As I started going through these last ones, I kept coming across articles for iOS 7, or an app that I think I liked in 2015 but isn't on the App Store anymore. I came across this article (which I also tooted about on Mastodon) from September 4, 2014 with the title What the Internet of Things Will Look Like in 2025 (Infographic). It's wildly naive, but a fun read nonetheless.

Needless to say it was the only gem in the 100 articles that I went through. I had so many saved articles that aren't 'Evergreen'. I then started looking at some of the articles that had been categorized and came across stuff for Django 1.11, Python 3.8, and other older stuff.

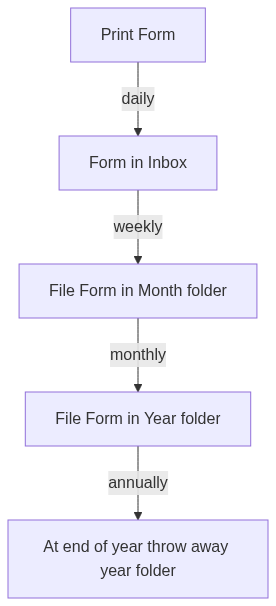

These were great articles when I read them, but I don't know that I need them now. In fact, when I looked at my general workflow for using any read-it-later service, I essentially save it to read later. If it's sitting in my read-it-later service for more than 4 weeks I'll either delete or just archive it.

So really, unless I'm planning on doing something with these articles, I'm not sure that I need to keep them. And that's when it hit me ... I can just delete them. All of them. I don't need to keep them. If they are truly impactful, I can write up something about them in Obsidian. If I really think someone else will get something out of my reaction I can write it up and post it. But, if I'm being honest with myself, this is just digital clutter that isn't "sparking" any joy for me.

So, just like that, I went from having 11,000+ links to having 0. And I have no ragrets.

I'm sure there's some deeper story here about physical things and just letting them go as well, and maybe I'll be able to apply that to my non-digital life, but for now, I'm just going to revel in the fact that I was able to offload this thing and just not ... care? Be sad? I'm not sure what the correct term would be here.

Regardless, it was a good exercise to have gone through, and I'm glad I did.

A New Project at Work

I was added to a work email that was requesting a not-so-small new project that was going to need to be completed. The problem that needed to be solved was a bit squishy, but it had been well thought out, and it had an importance to it that was easy to see.

There were still some workflows and data that needed to be reviewed, but overall it was on a good path to having a real project feel to it.

One question still outstanding is, what platform will this project be implemented on? In our EHR, or on a separate web app?

During my weekly project review meeting with the Web Development team, I let them know about the potential for this new project and that it would likely need to take priority over one of our current projects. The start is still a couple of weeks away, so we have time to plan for it (as much as we can anyway). We looked at the project board and determined a ranking of the current projects. We decided on the project that would likely get bumped if this new one ends up with the web developers. And just like that we had a contingency plan for how to handle this project given our current constraints.

Now, this project may never make its way to the web development team, but having that conversation with the manager, and then during our standup today, to let the team know that this might be something that will need to be worked on by them felt right. No surprises in a few weeks. No randomness about what projects we'll be working on ... just a bit of planning to prepare for something that might never come.

Eisenhower said, "Plans are nothing, planning is everything."

The team appreciated being in the loop about a potential project and being able to align expectations moving forward. I felt grateful that this was brought to my attention well before it was submitted as a request. The requester now has a bit more information on who to speak with internally, and it really felt like we were working together to solve a problem in a very professional way.

I wish all projects started like this. It would make life way easier and not so much like this

Updated TIL

While browsing Mastodon the other day I came across a toot that linked to this Cursed Knowledge page. I thought it was a great page, but it occurred to me that it could be helpful to apply the same sort of styling to my TIL Repo.

My TIL (Today I Learned) repository is basically a rip off of, I mean completely inspired by, Simon Willison's.

Now, I'm not a front end guy, and while I can do some pretty basic stuff with GitHub Actions, I knew that what I wanted to do far outstripped my current capabilities. Enter Claude!

I started with this prompt

You are an expert in GitHub Actions, and GitHub. Additionally, you are an expert in HTML, CSS, and JavaScript. The solutions provided can not leverage JavaScript frameworks.

I then made this request

I currently have a Today I learned (TIL) repository on GitHub located here

https://github.com/ryancheley/til

I came across the idea of documenting cursed information here

https://immich.app/cursed-knowledge/

I would like to implement my TIL in a timeline the same way the cursed knowledge page was done.

How can I do that?

It output this

name: Build TIL Timeline

on:
  push:
    branches: [ main ]
  pull_request:
    branches: [ main ]
  schedule:
    # Rebuild daily at 6 AM UTC
    - cron: '0 6 * * *'

permissions:
  contents: read
  pages: write
  id-token: write

jobs:
  build-timeline:
    runs-on: ubuntu-latest
    steps:
      - name: Checkout repository
        uses: actions/checkout@v4

      - name: Setup Node.js
        uses: actions/setup-node@v4
        with:
          node-version: '18'

      - name: Generate timeline data
        run: |
          node << 'EOF'
          const fs = require('fs');
          const path = require('path');

          // Function to extract metadata from markdown files
          function extractTilData(filePath, content) {
            const relativePath = path.relative('.', filePath);
            const fileName = path.basename(filePath, '.md');

            // Extract title from first heading or use filename
            const titleMatch = content.match(/^#\s+(.+)$/m);
            const title = titleMatch ? titleMatch[1] : fileName.replace(/[-_]/g, ' ');

            // Extract first paragraph as description
            const lines = content.split('\n').filter(line => line.trim());
            let description = '';
            for (const line of lines) {
              if (!line.startsWith('#') && line.trim() && !line.startsWith('```')) {
                description = line.trim();
                break;
              }
            }

            // Get file stats for date
            const stats = fs.statSync(filePath);
            const date = stats.mtime; // Use modification time

            // Extract category from directory structure
            const pathParts = relativePath.split('/');
            const category = pathParts.length > 1 ? pathParts[0] : 'General';

            return {
              title,
              description: description || 'No description available',
              date: date.toISOString().split('T')[0], // YYYY-MM-DD format
              url: `https://github.com/ryancheley/til/blob/main/${relativePath}`,
              category,
              fileName: relativePath
            };
          }

          // Recursively find all markdown files
          function findMarkdownFiles(dir) {
            const files = [];
            const entries = fs.readdirSync(dir, { withFileTypes: true });
            for (const entry of entries) {
              const fullPath = path.join(dir, entry.name);
              if (entry.isDirectory() && !entry.name.startsWith('.')) {
                files.push(...findMarkdownFiles(fullPath));
              } else if (entry.isFile() && entry.name.endsWith('.md') && entry.name !== 'README.md') {
                files.push(fullPath);
              }
            }
            return files;
          }

          // Generate timeline data
          const markdownFiles = findMarkdownFiles('.');
          const tilEntries = [];
          for (const filePath of markdownFiles) {
            try {
              const content = fs.readFileSync(filePath, 'utf8');
              const tilData = extractTilData(filePath, content);
              tilEntries.push(tilData);
            } catch (error) {
              console.error(`Error processing ${filePath}:`, error.message);
            }
          }

          // Sort by date (newest first)
          tilEntries.sort((a, b) => new Date(b.date) - new Date(a.date));

          // Create output directory
          if (!fs.existsSync('docs')) {
            fs.mkdirSync('docs');
          }

          // Write timeline data
          fs.writeFileSync('docs/timeline-data.json', JSON.stringify(tilEntries, null, 2));
          console.log(`Generated timeline with ${tilEntries.length} entries`);
          EOF

      - name: Generate timeline HTML
        run: |
          mkdir -p docs
          cat > docs/index.html << 'EOF'
          <!DOCTYPE html>
          <html lang="en">
          <head>
            <meta charset="UTF-8">
            <meta name="viewport" content="width=device-width, initial-scale=1.0">
            <title>Ryan's Today I Learned Timeline</title>
            <style>
              * {
                margin: 0;
                padding: 0;
                box-sizing: border-box;
              }

              body {
                font-family: -apple-system, BlinkMacSystemFont, 'Segoe UI', system-ui, sans-serif;
                background: linear-gradient(135deg, #667eea 0%, #764ba2 100%);
                min-height: 100vh;
                color: #333;
              }

              .container {
                max-width: 1200px;
                margin: 0 auto;
                padding: 2rem;
              }

              .header {
                text-align: center;
                margin-bottom: 3rem;
                color: white;
              }

              .header h1 {
                font-size: 3rem;
                margin-bottom: 1rem;
                text-shadow: 2px 2px 4px rgba(0,0,0,0.3);
              }

              .header p {
                font-size: 1.2rem;
                opacity: 0.9;
                max-width: 600px;
                margin: 0 auto;
              }

              .timeline {
                position: relative;
                margin-top: 2rem;
              }

              .timeline::before {
                content: '';
                position: absolute;
                left: 2rem;
                top: 0;
                bottom: 0;
                width: 2px;
                background: linear-gradient(to bottom, #4CAF50, #2196F3, #FF9800, #E91E63);
              }

              .timeline-item {
                position: relative;
                margin-bottom: 2rem;
                margin-left: 4rem;
                background: white;
                border-radius: 12px;
                padding: 1.5rem;
                box-shadow: 0 8px 25px rgba(0,0,0,0.1);
                transition: transform 0.3s ease, box-shadow 0.3s ease;
              }

              .timeline-item:hover {
                transform: translateY(-5px);
                box-shadow: 0 15px 35px rgba(0,0,0,0.15);
              }

              .timeline-item::before {
                content: '';
                position: absolute;
                left: -3rem;
                top: 2rem;
                width: 16px;
                height: 16px;
                background: #4CAF50;
                border: 3px solid white;
                border-radius: 50%;
                box-shadow: 0 0 0 3px rgba(76, 175, 80, 0.3);
              }

              .timeline-item:nth-child(4n+2)::before { background: #2196F3; box-shadow: 0 0 0 3px rgba(33, 150, 243, 0.3); }
              .timeline-item:nth-child(4n+3)::before { background: #FF9800; box-shadow: 0 0 0 3px rgba(255, 152, 0, 0.3); }
              .timeline-item:nth-child(4n+4)::before { background: #E91E63; box-shadow: 0 0 0 3px rgba(233, 30, 99, 0.3); }

              .timeline-header {
                display: flex;
                justify-content: space-between;
                align-items: flex-start;
                margin-bottom: 1rem;
                flex-wrap: wrap;
                gap: 1rem;
              }

              .timeline-title {
                font-size: 1.4rem;
                font-weight: 600;
                color: #2c3e50;
                text-decoration: none;
                flex-grow: 1;
                transition: color 0.3s ease;
              }

              .timeline-title:hover {
                color: #3498db;
              }

              .timeline-meta {
                display: flex;
                gap: 1rem;
                align-items: center;
                flex-shrink: 0;
              }

              .timeline-date {
                background: linear-gradient(135deg, #667eea, #764ba2);
                color: white;
                padding: 0.5rem 1rem;
                border-radius: 20px;
                font-size: 0.9rem;
                font-weight: 500;
              }

              .timeline-category {
                background: #f8f9fa;
                color: #6c757d;
                padding: 0.4rem 0.8rem;
                border-radius: 15px;
                font-size: 0.8rem;
                font-weight: 500;
                border: 1px solid #e9ecef;
              }

              .timeline-description {
                color: #5a6c7d;
                line-height: 1.6;
                font-size: 1rem;
              }

              .loading {
                text-align: center;
                padding: 3rem;
                color: white;
                font-size: 1.2rem;
              }

              .error {
                background: #f8d7da;
                color: #721c24;
                padding: 1rem;
                border-radius: 8px;
                border: 1px solid #f5c6cb;
                margin-bottom: 2rem;
              }

              .stats {
                background: rgba(255,255,255,0.95);
                border-radius: 12px;
                padding: 1.5rem;
                margin-bottom: 2rem;
                backdrop-filter: blur(10px);
                border: 1px solid rgba(255,255,255,0.2);
              }

              .stats-grid {
                display: grid;
                grid-template-columns: repeat(auto-fit, minmax(200px, 1fr));
                gap: 1rem;
                text-align: center;
              }

              .stat-item {
                padding: 1rem;
              }

              .stat-number {
                font-size: 2rem;
                font-weight: bold;
                color: #667eea;
                display: block;
              }

              .stat-label {
                color: #666;
                font-size: 0.9rem;
                margin-top: 0.5rem;
              }

              @media (max-width: 768px) {
                .container {
                  padding: 1rem;
                }

                .header h1 {
                  font-size: 2rem;
                }

                .timeline::before {
                  left: 1rem;
                }

                .timeline-item {
                  margin-left: 2rem;
                  padding: 1rem;
                }

                .timeline-item::before {
                  left: -2rem;
                }

                .timeline-header {
                  flex-direction: column;
                  align-items: stretch;
                }

                .timeline-meta {
                  justify-content: space-between;
                }
              }
            </style>

          </head>
          <body>
            <div class="container">
              <div class="header">
                <h1>Today I Learned</h1>
                <p>A timeline of discoveries, learnings, and insights from my development journey</p>
              </div>

              <div class="stats" id="stats" style="display: none;">
                <div class="stats-grid">
                  <div class="stat-item">
                    <span class="stat-number" id="totalEntries">0</span>
                    <div class="stat-label">Total Entries</div>
                  </div>
                  <div class="stat-item">
                    <span class="stat-number" id="categoriesCount">0</span>
                    <div class="stat-label">Categories</div>
                  </div>
                  <div class="stat-item">
                    <span class="stat-number" id="latestEntry">-</span>
                    <div class="stat-label">Latest Entry</div>
                  </div>
                </div>
              </div>

              <div class="loading" id="loading">Loading timeline...</div>
              <div class="error" id="error" style="display: none;"></div>
              <div class="timeline" id="timeline"></div>
            </div>

            <script>
              async function loadTimeline() {
                try {
                  const response = await fetch('timeline-data.json');
                  if (!response.ok) {
                    throw new Error('Failed to load timeline data');
                  }
                  const entries = await response.json();
                  displayTimeline(entries);
                  displayStats(entries);
                } catch (error) {
                  console.error('Error loading timeline:', error);
                  document.getElementById('loading').style.display = 'none';
                  document.getElementById('error').style.display = 'block';
                  document.getElementById('error').textContent = 'Failed to load timeline. Please try again later.';
                }
              }

              function displayStats(entries) {
                const categories = [...new Set(entries.map(entry => entry.category))];
                const latest = entries.length > 0 ? new Date(entries[0].date).toLocaleDateString() : '-';
                document.getElementById('totalEntries').textContent = entries.length;
                document.getElementById('categoriesCount').textContent = categories.length;
                document.getElementById('latestEntry').textContent = latest;
                document.getElementById('stats').style.display = 'block';
              }

              function displayTimeline(entries) {
                const timelineContainer = document.getElementById('timeline');
                const loading = document.getElementById('loading');
                loading.style.display = 'none';

                if (entries.length === 0) {
                  timelineContainer.innerHTML = '<p style="text-align: center; color: white;">No entries found.</p>';
                  return;
                }

                const timelineHTML = entries.map(entry => {
                  const date = new Date(entry.date).toLocaleDateString('en-US', {
                    year: 'numeric',
                    month: 'short',
                    day: 'numeric'
                  });
                  return `
                    <div class="timeline-item">
                      <div class="timeline-header">
                        <a href="${entry.url}" class="timeline-title" target="_blank" rel="noopener noreferrer">
                          ${entry.title}
                        </a>
                        <div class="timeline-meta">
                          <span class="timeline-category">${entry.category}</span>
                          <span class="timeline-date">${date}</span>
                        </div>
                      </div>
                      <div class="timeline-description">
                        ${entry.description}
                      </div>
                    </div>
                  `;
                }).join('');

                timelineContainer.innerHTML = timelineHTML;
              }

              // Load timeline on page load
              loadTimeline();
            </script>
          </body>
          </html>
          EOF

      - name: Setup Pages
        uses: actions/configure-pages@v4

      - name: Upload artifact
        uses: actions/upload-pages-artifact@v3
        with:
          path: 'docs'

  deploy:
    environment:
      name: github-pages
      url: ${{ steps.deployment.outputs.page_url }}
    runs-on: ubuntu-latest
    needs: build-timeline
    if: github.ref == 'refs/heads/main'
    steps:
      - name: Deploy to GitHub Pages
        id: deployment
        uses: actions/deploy-pages@v4

I added it to my TIL repo and tested to see how it looked. It was a great start!

I then followed up with

this is a great start! however, it looks like the are a few issues: 1. All of the dates on the timeline are the published date and not the date of the actual learning. There is a SQLite database that stores this kind of meta data 2. The order of the items matches the order they are displayed on the Read Me, which is by category and not by date (see point 1) 3. the colors for each TIL seem to just rotate in order and aren't associated with any specific category of TIL
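A note on the first issue in that follow-up: git does not store file modification times, so on a fresh checkout a file's mtime reflects when the runner wrote it, not when the TIL was written. My fix ended up using the SQLite metadata, but as an alternative, here is a rough Python sketch that reads the date of the oldest commit touching a file. The function names are hypothetical, and it assumes the repository was checked out with full history.

```python
import subprocess
from typing import Callable, Optional


def git_log_dates(path: str) -> str:
    """Author dates (strict ISO 8601) of every commit touching path, newest first."""
    result = subprocess.run(
        ["git", "log", "--follow", "--format=%aI", "--", path],
        capture_output=True, text=True, check=True,
    )
    return result.stdout


def first_commit_date(path: str,
                      log: Callable[[str], str] = git_log_dates) -> Optional[str]:
    """Return the YYYY-MM-DD date of the oldest commit for path, if any."""
    lines = [line.strip() for line in log(path).splitlines() if line.strip()]
    if not lines:
        return None  # file is untracked or has no history
    # git log lists newest first, so the last line is the oldest commit.
    return lines[-1].split("T")[0]
```

With a shallow clone only the most recent commit is visible, so this would report the same date for every file. That is why full history matters for this approach.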

Claude went to work and generated a new GitHub Action for me

name: Build TIL Timeline

on:
  push:
    branches: [ main ]
  pull_request:
    branches: [ main ]
  schedule:
    # Rebuild daily at 6 AM UTC
    - cron: '0 6 * * *'

permissions:
  contents: read
  pages: write
  id-token: write

jobs:
  build-timeline:
    runs-on: ubuntu-latest
    steps:
      - name: Check out repo
        uses: actions/checkout@v4
        with:
          fetch-depth: 0 # Need full history for git analysis

      - name: Set up Python
        uses: actions/setup-python@v5
        with:
          python-version: 3.12

      - uses: actions/cache@v4
        name: Configure pip caching
        with:
          path: ~/.cache/pip
          key: ${{ runner.os }}-pip-${{ hashFiles('**/requirements.txt') }}
          restore-keys: |
            ${{ runner.os }}-pip-

      - name: Install Python dependencies
        run: |
          python -m pip install --upgrade pip
          pip install -r requirements.txt

      - name: Build database
        run: python build_database.py

      - name: Generate timeline data from SQLite
        run: |
          python << 'EOF'
          import sqlite3
          import json
          import os
          from pathlib import Path

          # Connect to the SQLite database
          db_path = Path("tils.db")
          if not db_path.exists():
              print("Database not found!")
              exit(1)

          conn = sqlite3.connect(db_path)
          conn.row_factory = sqlite3.Row # Enable dict-like access to rows

          # Query all TIL entries, ordered by created date (actual learning date)
          cursor = conn.execute("""
              SELECT
                  path,
                  slug,
                  topic,
                  title,
                  url,
                  body,
                  created,
                  created_utc,
                  updated,
                  updated_utc
              FROM til
              ORDER BY created_utc DESC
          """)

          entries = []
          for row in cursor:
              # Extract first paragraph as description, excluding headers and code blocks
              lines = row['body'].split('\n')
              description = ''
              for line in lines:
                  line = line.strip()
                  if line and not line.startswith('#') and not line.startswith('```') and line != '---':
                      description = line
                      break

              # Clean up the description
              if len(description) > 200:
                  description = description[:200] + '...'

              # Extract date from created_utc (YYYY-MM-DD format)
              created_date = row['created_utc'].split('T')[0] if row['created_utc'] else row['created'].split('T')[0]

              entry = {
                  'title': row['title'],
                  'description': description or 'No description available',
                  'date': created_date,
                  'url': row['url'],
                  'category': row['topic'],
                  'fileName': row['path'].replace('_', '/'), # Convert back from slug format
                  'slug': row['slug']
              }
              entries.append(entry)

          conn.close()

          # Create output directory
          os.makedirs('docs', exist_ok=True)

          # Write timeline data
          with open('docs/timeline-data.json', 'w') as f:
              json.dump(entries, f, indent=2)

          print(f"Generated timeline with {len(entries)} entries")

          # Generate category statistics for consistent coloring
          categories = {}
          for entry in entries:
              cat = entry['category']
              if cat not in categories:
                  categories[cat] = len([e for e in entries if e['category'] == cat])

          # Sort categories by count (most popular first) for consistent color assignment
          sorted_categories = sorted(categories.items(), key=lambda x: x[1], reverse=True)
          category_colors = {}

          # Define a set of distinct colors for categories
          color_palette = [
              '#4CAF50', # Green
              '#2196F3', # Blue
              '#FF9800', # Orange
              '#E91E63', # Pink
              '#9C27B0', # Purple
              '#00BCD4', # Cyan
              '#FF5722', # Deep Orange
              '#795548', # Brown
              '#607D8B', # Blue Grey
              '#FFC107', # Amber
              '#8BC34A', # Light Green
              '#3F51B5', # Indigo
              '#F44336', # Red
              '#009688', # Teal
              '#CDDC39', # Lime
          ]

          for i, (category, count) in enumerate(sorted_categories):
              category_colors[category] = color_palette[i % len(color_palette)]

          # Write category color mapping
          with open('docs/category-colors.json', 'w') as f:
              json.dump(category_colors, f, indent=2)

          print(f"Generated color mapping for {len(category_colors)} categories")
          EOF

- name: Generate timeline HTML

run: |

cat > docs/index.html << 'EOF'

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Ryan's Today I Learned Timeline</title>

<meta name="description" content="A chronological timeline of learning discoveries from software development, featuring insights on Python, Django, SQL, and more.">

<style>

* {

margin: 0;

padding: 0;

box-sizing: border-box;

}

body {

font-family: -apple-system, BlinkMacSystemFont, 'Segoe UI', system-ui, sans-serif;

background: linear-gradient(135deg, #667eea 0%, #764ba2 100%);

min-height: 100vh;

color: #333;

}

.container {

max-width: 1200px;

margin: 0 auto;

padding: 2rem;

}

.header {

text-align: center;

margin-bottom: 3rem;

color: white;

}

.header h1 {

font-size: 3rem;

margin-bottom: 1rem;

text-shadow: 2px 2px 4px rgba(0,0,0,0.3);

}

.header p {

font-size: 1.2rem;

opacity: 0.9;

max-width: 600px;

margin: 0 auto;

}

.filters {

background: rgba(255,255,255,0.95);

border-radius: 12px;

padding: 1.5rem;

margin-bottom: 2rem;

backdrop-filter: blur(10px);

border: 1px solid rgba(255,255,255,0.2);

}

.filter-group {

display: flex;

flex-wrap: wrap;

gap: 0.5rem;

align-items: center;

}

.filter-label {

font-weight: 600;

margin-right: 1rem;

color: #666;

}

.category-filter {

padding: 0.4rem 0.8rem;

border-radius: 20px;

border: 2px solid transparent;

background: #f8f9fa;

color: #666;

cursor: pointer;

transition: all 0.3s ease;

font-size: 0.9rem;

user-select: none;

}

.category-filter:hover {

transform: translateY(-2px);

box-shadow: 0 4px 8px rgba(0,0,0,0.1);

}

.category-filter.active {

color: white;

border-color: currentColor;

font-weight: 600;

}

.timeline {

position: relative;

margin-top: 2rem;

}

.timeline::before {

content: '';

position: absolute;

left: 2rem;

top: 0;

bottom: 0;

width: 2px;

background: linear-gradient(to bottom, #4CAF50, #2196F3, #FF9800, #E91E63);

}

.timeline-item {

position: relative;

margin-bottom: 2rem;

margin-left: 4rem;

background: white;

border-radius: 12px;

padding: 1.5rem;

box-shadow: 0 8px 25px rgba(0,0,0,0.1);

transition: all 0.3s ease;

opacity: 1;

}

.timeline-item.hidden {

display: none;

}

.timeline-item:hover {

transform: translateY(-5px);

box-shadow: 0 15px 35px rgba(0,0,0,0.15);

}

.timeline-item::before {

content: '';

position: absolute;

left: -3rem;

top: 2rem;

width: 16px;

height: 16px;

background: var(--category-color, #4CAF50);

border: 3px solid white;

border-radius: 50%;

box-shadow: 0 0 0 3px rgba(76, 175, 80, 0.3);

}

.timeline-header {

display: flex;

justify-content: space-between;

align-items: flex-start;

margin-bottom: 1rem;

flex-wrap: wrap;

gap: 1rem;

}

.timeline-title {

font-size: 1.4rem;

font-weight: 600;

color: #2c3e50;

text-decoration: none;

flex-grow: 1;

transition: color 0.3s ease;

}

.timeline-title:hover {

color: #3498db;

}

.timeline-meta {

display: flex;

gap: 1rem;

align-items: center;

flex-shrink: 0;

}

.timeline-date {

background: linear-gradient(135deg, #667eea, #764ba2);

color: white;

padding: 0.5rem 1rem;

border-radius: 20px;

font-size: 0.9rem;

font-weight: 500;

}

.timeline-category {

background: var(--category-color, #f8f9fa);

color: white;

padding: 0.4rem 0.8rem;

border-radius: 15px;

font-size: 0.8rem;

font-weight: 500;

border: 1px solid rgba(255,255,255,0.2);

}

.timeline-description {

color: #5a6c7d;

line-height: 1.6;

font-size: 1rem;

}

.loading {

text-align: center;

padding: 3rem;

color: white;

font-size: 1.2rem;

}

.error {

background: #f8d7da;

color: #721c24;

padding: 1rem;

border-radius: 8px;

border: 1px solid #f5c6cb;

margin-bottom: 2rem;

}

.stats {

background: rgba(255,255,255,0.95);

border-radius: 12px;

padding: 1.5rem;

margin-bottom: 2rem;

backdrop-filter: blur(10px);

border: 1px solid rgba(255,255,255,0.2);

}

.stats-grid {

display: grid;

grid-template-columns: repeat(auto-fit, minmax(200px, 1fr));

gap: 1rem;

text-align: center;

}

.stat-item {

padding: 1rem;

}

.stat-number {

font-size: 2rem;

font-weight: bold;

color: #667eea;

display: block;

}

.stat-label {

color: #666;

font-size: 0.9rem;

margin-top: 0.5rem;

}

@media (max-width: 768px) {

.container {

padding: 1rem;

}

.header h1 {

font-size: 2rem;

}

.timeline::before {

left: 1rem;

}

.timeline-item {

margin-left: 2rem;

padding: 1rem;

}

.timeline-item::before {

left: -2rem;

}

.timeline-header {

flex-direction: column;

align-items: stretch;

}

.timeline-meta {

justify-content: space-between;

}

.filter-group {

flex-direction: column;

align-items: stretch;

gap: 1rem;

}

.category-filter {

text-align: center;

}

}

</style>

</head>

<body>

<div class="container">

<div class="header">

<h1>Today I Learned</h1>

<p>A chronological timeline of discoveries, learnings, and insights from my development journey</p>

</div>

<div class="stats" id="stats" style="display: none;">

<div class="stats-grid">

<div class="stat-item">

<span class="stat-number" id="totalEntries">0</span>

<div class="stat-label">Total Entries</div>

</div>

<div class="stat-item">

<span class="stat-number" id="categoriesCount">0</span>

<div class="stat-label">Categories</div>

</div>

<div class="stat-item">

<span class="stat-number" id="latestEntry">-</span>

<div class="stat-label">Latest Entry</div>

</div>

<div class="stat-item">

<span class="stat-number" id="filteredCount">0</span>

<div class="stat-label">Showing</div>

</div>

</div>

</div>

<div class="filters" id="filters" style="display: none;">

<div class="filter-group">

<span class="filter-label">Filter by category:</span>

<div id="categoryFilters"></div>

</div>

</div>

<div class="loading" id="loading">Loading timeline...</div>

<div class="error" id="error" style="display: none;"></div>

<div class="timeline" id="timeline"></div>

</div>

<script>

let allEntries = [];

let categoryColors = {};

let activeCategory = null;

async function loadTimeline() {

try {

// Load timeline data and category colors

const [entriesResponse, colorsResponse] = await Promise.all([

fetch('timeline-data.json'),

fetch('category-colors.json')

]);

if (!entriesResponse.ok || !colorsResponse.ok) {

throw new Error('Failed to load timeline data');

}

allEntries = await entriesResponse.json();

categoryColors = await colorsResponse.json();

displayTimeline(allEntries);

displayStats(allEntries);

createCategoryFilters();

} catch (error) {

console.error('Error loading timeline:', error);

document.getElementById('loading').style.display = 'none';

document.getElementById('error').style.display = 'block';

document.getElementById('error').textContent = 'Failed to load timeline. Please try again later.';

}

}

function createCategoryFilters() {

const categories = [...new Set(allEntries.map(entry => entry.category))];

const filtersContainer = document.getElementById('categoryFilters');

// Add "All" filter

const allFilter = document.createElement('span');

allFilter.className = 'category-filter active';

allFilter.textContent = 'All';

allFilter.onclick = () => filterByCategory(null);

filtersContainer.appendChild(allFilter);

// Add category filters

categories.sort().forEach(category => {

const filter = document.createElement('span');

filter.className = 'category-filter';

filter.textContent = category;

filter.style.setProperty('--category-color', categoryColors[category] || '#666');

filter.onclick = () => filterByCategory(category);

filtersContainer.appendChild(filter);

});

document.getElementById('filters').style.display = 'block';

}

function filterByCategory(category) {

activeCategory = category;

// Update filter button states

document.querySelectorAll('.category-filter').forEach(filter => {

filter.classList.remove('active');

filter.style.background = ''; // Clear the inline color left behind by a previously active filter

if ((category === null && filter.textContent === 'All') ||

filter.textContent === category) {

filter.classList.add('active');

if (category !== null) {

filter.style.background = categoryColors[category];

}

}

});

// Filter timeline items

const filteredEntries = category ?

allEntries.filter(entry => entry.category === category) :

allEntries;

displayTimeline(filteredEntries);

updateFilteredCount(filteredEntries.length);

}

function updateFilteredCount(count) {

document.getElementById('filteredCount').textContent = count;

}

function displayStats(entries) {

const categories = [...new Set(entries.map(entry => entry.category))];

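// Date-only strings are parsed as UTC midnight, so format in UTC to avoid showing the previous day

const latest = entries.length > 0 ? new Date(entries[0].date).toLocaleDateString(undefined, { timeZone: 'UTC' }) : '-';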

document.getElementById('totalEntries').textContent = entries.length;

document.getElementById('categoriesCount').textContent = categories.length;

document.getElementById('latestEntry').textContent = latest;

document.getElementById('filteredCount').textContent = entries.length;

document.getElementById('stats').style.display = 'block';

}

function displayTimeline(entries) {

const timelineContainer = document.getElementById('timeline');

const loading = document.getElementById('loading');

loading.style.display = 'none';

if (entries.length === 0) {

timelineContainer.innerHTML = '<p style="text-align: center; color: white;">No entries found.</p>';

return;

}

const timelineHTML = entries.map(entry => {

const date = new Date(entry.date).toLocaleDateString('en-US', {

year: 'numeric',

month: 'short',

day: 'numeric',

timeZone: 'UTC' // entry.date is a date-only string, which JavaScript parses as UTC midnight

});

const categoryColor = categoryColors[entry.category] || '#666';

return `

<div class="timeline-item" style="--category-color: ${categoryColor}">

<div class="timeline-header">

<a href="${entry.url}" class="timeline-title" target="_blank" rel="noopener noreferrer">

${entry.title}

</a>

<div class="timeline-meta">

<span class="timeline-category" style="--category-color: ${categoryColor}; background: ${categoryColor};">

${entry.category}

</span>

<span class="timeline-date">${date}</span>

</div>

</div>

<div class="timeline-description">

${entry.description}

</div>

</div>

`;

}).join('');

timelineContainer.innerHTML = timelineHTML;

}

// Load timeline on page load

loadTimeline();

</script>

</body>

</html>

EOF

- name: Setup Pages

uses: actions/configure-pages@v4

- name: Upload artifact

uses: actions/upload-pages-artifact@v3

with:

path: 'docs'

deploy:

environment:

name: github-pages

url: ${{ steps.deployment.outputs.page_url }}

runs-on: ubuntu-latest

needs: build-timeline

if: github.ref == 'refs/heads/main'

steps:

- name: Deploy to GitHub Pages

id: deployment

uses: actions/deploy-pages@v4

Another huge step forward! However, one last change was needed:

oh wow! much better! The last issue I'm seeing is that because I have so many categories, the Filter by category needs a way to wrap based on the page width. Right now it's on a single line, but would likely benefit from a css style that will wrap the lines

And I got this:

name: Build TIL Timeline

on:

push:

branches: [ main ]

pull_request:

branches: [ main ]

schedule:

# Rebuild daily at 6 AM UTC

- cron: '0 6 * * *'

permissions:

contents: read

pages: write

id-token: write

jobs:

build-timeline:

runs-on: ubuntu-latest

steps:

- name: Check out repo

uses: actions/checkout@v4

with:

fetch-depth: 0 # Need full history for git analysis

- name: Set up Python

uses: actions/setup-python@v5

with:

python-version: '3.12' # Quoted so YAML keeps it a string (an unquoted 3.10 would be read as 3.1)

- uses: actions/cache@v4

name: Configure pip caching

with:

path: ~/.cache/pip

key: ${{ runner.os }}-pip-${{ hashFiles('**/requirements.txt') }}

restore-keys: |

${{ runner.os }}-pip-

- name: Install Python dependencies

run: |

python -m pip install --upgrade pip

pip install -r requirements.txt

- name: Build database

run: python build_database.py

- name: Generate timeline data from SQLite

run: |

python << 'EOF'

import sqlite3

import json

import os

from pathlib import Path

# Connect to the SQLite database

db_path = Path("tils.db")

if not db_path.exists():

print("Database not found!")

exit(1)

conn = sqlite3.connect(db_path)

conn.row_factory = sqlite3.Row # Enable dict-like access to rows

# Query all TIL entries, ordered by created date (actual learning date)

cursor = conn.execute("""

SELECT

path,

slug,

topic,

title,

url,

body,

created,

created_utc,

updated,

updated_utc

FROM til

ORDER BY created_utc DESC

""")

entries = []

for row in cursor:

# Extract first paragraph as description, excluding headers and code blocks

lines = row['body'].split('\n')

description = ''

for line in lines:

line = line.strip()

if line and not line.startswith('#') and not line.startswith('```') and line != '---':

description = line

break

# Clean up the description

if len(description) > 200:

description = description[:200] + '...'

# Extract date from created_utc (YYYY-MM-DD format)

created_date = row['created_utc'].split('T')[0] if row['created_utc'] else row['created'].split('T')[0]

entry = {

'title': row['title'],

'description': description or 'No description available',

'date': created_date,

'url': row['url'],

'category': row['topic'],

'fileName': row['path'].replace('_', '/'), # Convert back from slug format

'slug': row['slug']

}

entries.append(entry)

conn.close()

# Create output directory

os.makedirs('docs', exist_ok=True)

# Write timeline data

with open('docs/timeline-data.json', 'w') as f:

json.dump(entries, f, indent=2)

print(f"Generated timeline with {len(entries)} entries")

# Generate category statistics for consistent coloring

categories = {}

for entry in entries:

cat = entry['category']

if cat not in categories:

categories[cat] = len([e for e in entries if e['category'] == cat])

# Sort categories by count (most popular first) for consistent color assignment

sorted_categories = sorted(categories.items(), key=lambda x: x[1], reverse=True)

category_colors = {}

# Define a set of distinct colors for categories

color_palette = [

'#4CAF50', # Green

'#2196F3', # Blue

'#FF9800', # Orange

'#E91E63', # Pink

'#9C27B0', # Purple

'#00BCD4', # Cyan

'#FF5722', # Deep Orange

'#795548', # Brown

'#607D8B', # Blue Grey

'#FFC107', # Amber

'#8BC34A', # Light Green

'#3F51B5', # Indigo

'#F44336', # Red

'#009688', # Teal

'#CDDC39', # Lime

]

for i, (category, count) in enumerate(sorted_categories):

category_colors[category] = color_palette[i % len(color_palette)]

# Write category color mapping

with open('docs/category-colors.json', 'w') as f:

json.dump(category_colors, f, indent=2)

print(f"Generated color mapping for {len(category_colors)} categories")

EOF

- name: Generate timeline HTML

run: |

cat > docs/index.html << 'EOF'

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<meta name="viewport" content="width=device-width, initial-scale=1.0">

<title>Ryan's Today I Learned Timeline</title>

<meta name="description" content="A chronological timeline of learning discoveries from software development, featuring insights on Python, Django, SQL, and more.">

<style>

* {

margin: 0;

padding: 0;

box-sizing: border-box;

}

body {

font-family: -apple-system, BlinkMacSystemFont, 'Segoe UI', system-ui, sans-serif;

background: linear-gradient(135deg, #667eea 0%, #764ba2 100%);

min-height: 100vh;

color: #333;

}

.container {

max-width: 1200px;

margin: 0 auto;

padding: 2rem;

}

.header {

text-align: center;

margin-bottom: 3rem;

color: white;

}

.header h1 {

font-size: 3rem;

margin-bottom: 1rem;

text-shadow: 2px 2px 4px rgba(0,0,0,0.3);

}

.header p {

font-size: 1.2rem;

opacity: 0.9;

max-width: 600px;

margin: 0 auto;

}

.filters {

background: rgba(255,255,255,0.95);

border-radius: 12px;

padding: 1.5rem;

margin-bottom: 2rem;

backdrop-filter: blur(10px);

border: 1px solid rgba(255,255,255,0.2);

}

.filter-group {

display: flex;

flex-direction: column;

gap: 1rem;

}

.filter-label {

font-weight: 600;

color: #666;

margin-bottom: 0.5rem;

}

.category-filters-container {

display: flex;

flex-wrap: wrap;

gap: 0.5rem;

align-items: center;

}

.category-filter {

padding: 0.4rem 0.8rem;

border-radius: 20px;

border: 2px solid transparent;

background: #f8f9fa;

color: #666;

cursor: pointer;

transition: all 0.3s ease;

font-size: 0.9rem;

user-select: none;

}

.category-filter:hover {

transform: translateY(-2px);

box-shadow: 0 4px 8px rgba(0,0,0,0.1);

}

.category-filter.active {

color: white;

border-color: currentColor;

font-weight: 600;

}

.timeline {

position: relative;

margin-top: 2rem;

}

.timeline::before {

content: '';

position: absolute;

left: 2rem;

top: 0;

bottom: 0;

width: 2px;

background: linear-gradient(to bottom, #4CAF50, #2196F3, #FF9800, #E91E63);

}

.timeline-item {

position: relative;

margin-bottom: 2rem;

margin-left: 4rem;

background: white;

border-radius: 12px;

padding: 1.5rem;

box-shadow: 0 8px 25px rgba(0,0,0,0.1);

transition: all 0.3s ease;

opacity: 1;

}

.timeline-item.hidden {

display: none;

}

.timeline-item:hover {

transform: translateY(-5px);

box-shadow: 0 15px 35px rgba(0,0,0,0.15);

}

.timeline-item::before {

content: '';

position: absolute;

left: -3rem;

top: 2rem;

width: 16px;

height: 16px;

background: var(--category-color, #4CAF50);

border: 3px solid white;

border-radius: 50%;

box-shadow: 0 0 0 3px rgba(76, 175, 80, 0.3);

}

.timeline-header {

display: flex;

justify-content: space-between;

align-items: flex-start;

margin-bottom: 1rem;

flex-wrap: wrap;

gap: 1rem;

}

.timeline-title {

font-size: 1.4rem;

font-weight: 600;

color: #2c3e50;

text-decoration: none;

flex-grow: 1;

transition: color 0.3s ease;

}

.timeline-title:hover {

color: #3498db;

}

.timeline-meta {

display: flex;

gap: 1rem;

align-items: center;

flex-shrink: 0;

}

.timeline-date {

background: linear-gradient(135deg, #667eea, #764ba2);

color: white;

padding: 0.5rem 1rem;

border-radius: 20px;

font-size: 0.9rem;

font-weight: 500;

}

.timeline-category {

background: var(--category-color, #f8f9fa);

color: white;

padding: 0.4rem 0.8rem;

border-radius: 15px;

font-size: 0.8rem;

font-weight: 500;

border: 1px solid rgba(255,255,255,0.2);

}

.timeline-description {

color: #5a6c7d;

line-height: 1.6;

font-size: 1rem;

}

.loading {

text-align: center;

padding: 3rem;

color: white;

font-size: 1.2rem;

}

.error {

background: #f8d7da;

color: #721c24;

padding: 1rem;

border-radius: 8px;

border: 1px solid #f5c6cb;

margin-bottom: 2rem;

}

.stats {

background: rgba(255,255,255,0.95);

border-radius: 12px;

padding: 1.5rem;

margin-bottom: 2rem;

backdrop-filter: blur(10px);

border: 1px solid rgba(255,255,255,0.2);

}

.stats-grid {

display: grid;

grid-template-columns: repeat(auto-fit, minmax(200px, 1fr));

gap: 1rem;

text-align: center;

}

.stat-item {

padding: 1rem;

}

.stat-number {

font-size: 2rem;

font-weight: bold;

color: #667eea;

display: block;

}

.stat-label {

color: #666;

font-size: 0.9rem;

margin-top: 0.5rem;

}

@media (max-width: 768px) {

.container {

padding: 1rem;

}

.header h1 {

font-size: 2rem;

}

.timeline::before {

left: 1rem;

}

.timeline-item {

margin-left: 2rem;

padding: 1rem;

}

.timeline-item::before {

left: -2rem;

}

.timeline-header {

flex-direction: column;

align-items: stretch;

}

.timeline-meta {

justify-content: space-between;

}

.filter-group {

gap: 1rem;

}

.category-filters-container {

justify-content: center;

}

}

</style>

</head>

<body>

<div class="container">

<div class="header">

<h1>Today I Learned</h1>

<p>A chronological timeline of discoveries, learnings, and insights from my development journey</p>

</div>

<div class="stats" id="stats" style="display: none;">

<div class="stats-grid">

<div class="stat-item">

<span class="stat-number" id="totalEntries">0</span>

<div class="stat-label">Total Entries</div>

</div>

<div class="stat-item">

<span class="stat-number" id="categoriesCount">0</span>

<div class="stat-label">Categories</div>

</div>

<div class="stat-item">

<span class="stat-number" id="latestEntry">-</span>

<div class="stat-label">Latest Entry</div>

</div>

<div class="stat-item">

<span class="stat-number" id="filteredCount">0</span>

<div class="stat-label">Showing</div>

</div>

</div>

</div>

<div class="filters" id="filters" style="display: none;">

<div class="filter-group">

<span class="filter-label">Filter by category:</span>

<div class="category-filters-container" id="categoryFilters"></div>

</div>

</div>

<div class="loading" id="loading">Loading timeline...</div>

<div class="error" id="error" style="display: none;"></div>

<div class="timeline" id="timeline"></div>

</div>

<script>

let allEntries = [];

let categoryColors = {};

let activeCategory = null;

async function loadTimeline() {

try {

// Load timeline data and category colors

const [entriesResponse, colorsResponse] = await Promise.all([

fetch('timeline-data.json'),

fetch('category-colors.json')

]);

if (!entriesResponse.ok || !colorsResponse.ok) {

throw new Error('Failed to load timeline data');

}

allEntries = await entriesResponse.json();

categoryColors = await colorsResponse.json();

displayTimeline(allEntries);

displayStats(allEntries);

createCategoryFilters();

} catch (error) {

console.error('Error loading timeline:', error);

document.getElementById('loading').style.display = 'none';

document.getElementById('error').style.display = 'block';

document.getElementById('error').textContent = 'Failed to load timeline. Please try again later.';

}

}

function createCategoryFilters() {

const categories = [...new Set(allEntries.map(entry => entry.category))];

const filtersContainer = document.getElementById('categoryFilters');

// Add "All" filter

const allFilter = document.createElement('span');

allFilter.className = 'category-filter active';

allFilter.textContent = 'All';

allFilter.onclick = () => filterByCategory(null);

filtersContainer.appendChild(allFilter);

// Add category filters

categories.sort().forEach(category => {

const filter = document.createElement('span');

filter.className = 'category-filter';

filter.textContent = category;

filter.style.setProperty('--category-color', categoryColors[category] || '#666');

filter.onclick = () => filterByCategory(category);

filtersContainer.appendChild(filter);

});

document.getElementById('filters').style.display = 'block';

}

function filterByCategory(category) {

activeCategory = category;

// Update filter button states

document.querySelectorAll('.category-filter').forEach(filter => {

filter.classList.remove('active');

filter.style.background = ''; // Clear the inline color left behind by a previously active filter

if ((category === null && filter.textContent === 'All') ||

filter.textContent === category) {

filter.classList.add('active');

if (category !== null) {

filter.style.background = categoryColors[category];

}

}

});

// Filter timeline items

const filteredEntries = category ?

allEntries.filter(entry => entry.category === category) :

allEntries;

displayTimeline(filteredEntries);

updateFilteredCount(filteredEntries.length);

}

function updateFilteredCount(count) {

document.getElementById('filteredCount').textContent = count;

}

function displayStats(entries) {

const categories = [...new Set(entries.map(entry => entry.category))];

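// Date-only strings are parsed as UTC midnight, so format in UTC to avoid showing the previous day

const latest = entries.length > 0 ? new Date(entries[0].date).toLocaleDateString(undefined, { timeZone: 'UTC' }) : '-';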

document.getElementById('totalEntries').textContent = entries.length;

document.getElementById('categoriesCount').textContent = categories.length;

document.getElementById('latestEntry').textContent = latest;

document.getElementById('filteredCount').textContent = entries.length;

document.getElementById('stats').style.display = 'block';

}

function displayTimeline(entries) {

const timelineContainer = document.getElementById('timeline');

const loading = document.getElementById('loading');

loading.style.display = 'none';

if (entries.length === 0) {

timelineContainer.innerHTML = '<p style="text-align: center; color: white;">No entries found.</p>';

return;

}

const timelineHTML = entries.map(entry => {

const date = new Date(entry.date).toLocaleDateString('en-US', {

year: 'numeric',

month: 'short',

day: 'numeric',

timeZone: 'UTC' // entry.date is a date-only string, which JavaScript parses as UTC midnight

});

const categoryColor = categoryColors[entry.category] || '#666';

return `

<div class="timeline-item" style="--category-color: ${categoryColor}">

<div class="timeline-header">

<a href="${entry.url}" class="timeline-title" target="_blank" rel="noopener noreferrer">

${entry.title}

</a>

<div class="timeline-meta">

<span class="timeline-category" style="--category-color: ${categoryColor}; background: ${categoryColor};">

${entry.category}

</span>

<span class="timeline-date">${date}</span>

</div>

</div>

<div class="timeline-description">

${entry.description}

</div>

</div>

`;

}).join('');

timelineContainer.innerHTML = timelineHTML;

}

// Load timeline on page load

loadTimeline();

</script>

</body>

</html>

EOF

- name: Setup Pages

uses: actions/configure-pages@v4

- name: Upload artifact

uses: actions/upload-pages-artifact@v3

with:

path: 'docs'

deploy:

environment:

name: github-pages

url: ${{ steps.deployment.outputs.page_url }}

runs-on: ubuntu-latest

needs: build-timeline

if: github.ref == 'refs/heads/main'

steps:

- name: Deploy to GitHub Pages

id: deployment

uses: actions/deploy-pages@v4
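The two pieces of logic buried in the workflow's inline Python, picking a description and assigning colors, are easier to check locally as plain functions. Here is a minimal sketch of that idea. The function names (first_paragraph, assign_colors) and the shortened three-color palette are assumptions for illustration and are not part of the workflow itself.

```python
from collections import Counter

# First three colors of the workflow's palette, shortened for the example.
PALETTE = ['#4CAF50', '#2196F3', '#FF9800']

# Markdown code-fence marker, built this way to keep the example easy to embed.
FENCE = '`' * 3


def first_paragraph(body: str, limit: int = 200) -> str:
    """Return the first line that is not a heading, code fence, or '---' rule."""
    for raw in body.split('\n'):
        line = raw.strip()
        if line and not line.startswith(('#', FENCE)) and line != '---':
            # Truncate long lines the same way the workflow does.
            return line[:limit] + '...' if len(line) > limit else line
    return 'No description available'


def assign_colors(topics: list[str], palette: list[str] = PALETTE) -> dict[str, str]:
    """Give the most frequent topic the first color, wrapping around the palette."""
    ranked = [topic for topic, _ in Counter(topics).most_common()]
    return {topic: palette[i % len(palette)] for i, topic in enumerate(ranked)}


print(first_paragraph('# Title\n\n---\nHello world\nmore'))
print(assign_colors(['python', 'django', 'python']))
```

Counter counts each topic in a single pass, which avoids re-scanning the whole entry list once per category.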

All told, from start to finish, this took about 20 minutes. There are still some potential updates, but for 20 minutes of work I was able to take a 'wild' idea that I would never have been able to build before and end up with something that I'm actually excited about! It also has the added bonus of encouraging me to write more TILs, because I now have this nice-looking timeline of them.

Fun with MCPs

Special thanks to Jeff Triplett, who provided an example that really got me started on understanding how this all works.

While trying to wrap my head around MCPs over the long Memorial Day weekend, I had a breakthrough. I'm not really sure why this was so hard for me to grok, but now something seems to have clicked.

I am working with Pydantic AI, so I'll use that for my examples, but since MCP is a standard protocol, these concepts apply broadly across different implementations.

What is Model Context Protocol (MCP)?

Per the Anthropic announcement (from November 2024!!!!):

The Model Context Protocol is an open standard that enables developers to build secure, two-way connections between their data sources and AI-powered tools. The architecture is straightforward: developers can either expose their data through MCP servers or build AI applications (MCP clients) that connect to these servers.

What this means is that there is a standard way to give models, like Anthropic's Claude or OpenAI's GPT models, access to other information. That information can be files on the file system, data in a database, etc.
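To make "a standard way" a little more concrete: MCP messages are JSON-RPC 2.0, and a client asks a server to run one of its tools with a tools/call request. Here is a minimal sketch of building such a request. The tool name read_file and the file path are assumptions for illustration, since each server advertises its own tools.

```python
import json


def tool_call_request(request_id: int, tool: str, arguments: dict) -> str:
    """Serialize one MCP 'tools/call' request as a single-line JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and path, for illustration only.
print(tool_call_request(1, "read_file", {"path": "/path/to/your/files/support.csv"}))
```

A client library such as Pydantic AI builds and sends these messages for you, so you normally never write them by hand.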

(Potential) Real World Example

I work for a healthcare organization in Southern California. One of the biggest challenges when onboarding new hires (and, honestly, for people who have been with the organization for a long time) is knowing who to reach out to for support on a specific application.

Typically a user will send an email to one of the support teams, and the request can get bounced around for a while until it finally lands on the 'right' support desk. There's the potential to have the applications themselves include who to contact, but some applications are vendor-supplied and there isn't always a way to do that.

Even when there is, in my experience those contact details often go unnoticed by users, OR the users will think that the support email is for non-technical issues, like "Please update the phone number for this patient," and not for issues like "The web page isn't returning any results for me, but it is for my coworker."

Enter an MCP with a Local LLM

Let's say you have a service that allows you to search through a file system in a predefined set of directories. This service is run with the following command:

npx -y --no-cache @modelcontextprotocol/server-filesystem /path/to/your/files

In Pydantic AI, MCPServerStdio uses this same syntax, only it breaks it into two parts:

- command

- args

The command is any application in your $PATH, like uvx, docker, or npx. You can also point to the executable explicitly by giving its full path, like /Users/ryancheley/.local/share/mise/installs/bun/latest/bin/bunx

The args are the arguments you'd pass to that application.

Taking the command from above and breaking it down, we can set up our MCP server using the following:

MCPServerStdio(
    "npx",
    args=[
        "-y",
        "--no-cache",
        "@modelcontextprotocol/server-filesystem",
        "/path/to/your/files",
    ],
)
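The command/args split can also be done mechanically. Here is a minimal sketch using the standard library's shlex module. The helper name split_command is hypothetical and is not part of Pydantic AI.

```python
import shlex


def split_command(command_line: str) -> tuple[str, list[str]]:
    """Split a shell-style command line into (command, args)."""
    command, *args = shlex.split(command_line)
    return command, args


command, args = split_command(
    "npx -y --no-cache @modelcontextprotocol/server-filesystem /path/to/your/files"
)
print(command)
print(args)
```

The first token is what you'd pass as the command, and everything after it goes into the args list, in the same order as on the command line.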

Application of MCP with the Example

Since I work in healthcare, I want to be mindful of protecting patient data. Even though no patient data will be exposed to the LLM here, I'll use Ollama, which runs models locally, to construct my example.

I created a support.csv file that contains the following information:

- Common Name of the Application

- URL of the Application

- Support Email

- Support Extension

- Department

I used the following prompt:

Review the file support.csv and help me determine who I contact about questions related to CarePath Analytics.

Here are the contents of the support.csv file:

| Name | URL | Support Email | Support Extension | Department |

|---|---|---|---|---|

| MedFlow Solutions | https://medflow.com | support@medflow.com | 1234 | Clinical Systems |

| HealthTech Portal | https://healthtech-portal.org | help@medflow.com | 3456 | Patient Services |

| CarePath Analytics | https://carepath.io | support@medflow.com | 4567 | Data Analytics |

| VitalSign Monitor | https://vitalsign.net | support@medflow.com | 1234 | Clinical Systems |

| Patient Connect Hub | https://patientconnect.com | contact@medflow.com | 3456 | Patient Services |

| EHR Bridge | https://ehrbridge.org | support@medflow.com | 2341 | Integration Services |

| Clinical Workflow Pro | https://clinicalwf.com | support@medflow.com | 1234 | Clinical Systems |

| HealthData Sync | https://healthdata-sync.net | sync@medflow.com | 6789 | Integration Services |

| TeleHealth Connect | https://telehealth-connect.com | help@medflow.com | 3456 | Patient Services |

| MedRecord Central | https://medrecord.central | records@medflow.com | 5678 | Medical Records |

The script is below:

# /// script
# requires-python = ">=3.12"
# dependencies = [
#     "pydantic-ai",
# ]
# ///

import asyncio

from pydantic_ai import Agent
from pydantic_ai.mcp import MCPServerStdio
from pydantic_ai.models.openai import OpenAIModel
from pydantic_ai.providers.openai import OpenAIProvider


async def main():
    # Configure the Ollama model using OpenAI-compatible API
    model = OpenAIModel(
        model_name='qwen3:8b',  # or whatever model you have installed locally
        provider=OpenAIProvider(base_url='http://localhost:11434/v1')
    )

    # Set up the MCP server to access our support files
    support_files_server = MCPServerStdio(
        "npx",
        args=[
            "-y",
            "@modelcontextprotocol/server-filesystem",
            "/path/to/your/files"  # Directory containing support.csv
        ]
    )

    # Create the agent with the model and MCP server
    agent = Agent(
        model=model,
        mcp_servers=[support_files_server],
    )

    # Run the agent with the MCP server
    async with agent.run_mcp_servers():
        # Get response from Ollama about support contact
        result = await agent.run(
            "Review the file `support.csv` and help me determine who I contact about questions related to CarePath Analytics?"
        )
        print(result.output)


if __name__ == "__main__":
    asyncio.run(main())

As a user, if I ask "Who do I contact about questions related to CarePath Analytics?", the LLM will search through the support.csv file and supply the email contact.

This example is a command-line script, and a web interface would probably be better for most users. That would be the next thing I'd try to do here.

Once that was done, you could extend it to also include an MCP server that writes an email on the user's behalf. It could even ask probing questions to help make sure that the email has more context for the support team.
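To make that idea a little more concrete, an email tool would ultimately wrap something like the function below. This is a hedged sketch using only the standard library: `draft_support_email` and its parameters are hypothetical, the sender address and the issue text are made-up placeholders, and nothing is actually sent. Exposing it to the agent through an MCP server is deliberately left out.

```python
from email.message import EmailMessage


def draft_support_email(
    to_address: str,
    application: str,
    summary: str,
    details: list,
    sender: str = "user@example.com",  # placeholder, not from the original post
) -> EmailMessage:
    """Build (but do not send) an email to the support team for `application`."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = to_address
    msg["Subject"] = f"[{application}] {summary}"
    # Answers to the agent's probing questions become bullet points in the body.
    body_lines = [f"Application: {application}", "", "Details:"]
    body_lines += [f"- {item}" for item in details]
    msg.set_content("\n".join(body_lines))
    return msg


draft = draft_support_email(
    to_address="support@medflow.com",
    application="CarePath Analytics",
    summary="Dashboard not loading",  # made-up issue for illustration
    details=["Started this morning", "Affects all users in Data Analytics"],
)
print(draft["Subject"])
print(draft.get_content())
```

Keeping the drafting step separate from sending means a human can still review the message before it goes anywhere, which seems like a sensible default in a healthcare setting.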

Some support systems have their own ticketing or issue-tracking systems, and it would be really valuable if the ticket could be written directly to that system. With MCP, this is possible.

We'd need to update the support.csv file with some information about direct writes via an API, and we'd need to secure the crap out of this, but it is possible.
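A rough sketch of what that could look like, assuming support.csv gained a hypothetical "Ticket API URL" column: the routing step only uses the ticket API when the URL is HTTPS and its host is on an explicit allowlist, and otherwise falls back to the support email. The column name, `choose_channel`, the allowlist, and the example.com URL are all assumptions for illustration, and this covers only a small slice of the security work that would really be needed.

```python
from urllib.parse import urlparse

# Hosts the agent is allowed to write tickets to (hypothetical value).
ALLOWED_TICKET_HOSTS = {"tickets.example.com"}


def choose_channel(row: dict) -> tuple:
    """Return ("ticket", url) when the row has a trusted ticket API, else ("email", address)."""
    url = row.get("Ticket API URL", "").strip()
    parsed = urlparse(url)
    # Require HTTPS and an allowlisted host before trusting a URL read from the CSV.
    if parsed.scheme == "https" and parsed.hostname in ALLOWED_TICKET_HOSTS:
        return ("ticket", url)
    return ("email", row["Support Email"])


rows = [
    {"Name": "CarePath Analytics", "Support Email": "support@medflow.com",
     "Ticket API URL": "https://tickets.example.com/api/issues"},
    {"Name": "EHR Bridge", "Support Email": "support@medflow.com",
     "Ticket API URL": ""},
]
for row in rows:
    print(row["Name"], "->", choose_channel(row))
```

Validating the URL in code, rather than trusting whatever the CSV or the model supplies, keeps the decision about where data may be written out of the LLM's hands.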

Now, the user can be more confident that their issue will go to the team that it needs to and that their question / issue can be resolved much more quickly.

Firebirds 2024-25 Season

The 2024-25 season for the Coachella Valley Firebirds ended on May 9th with a 2-0 loss to the Abbotsford Canucks. Overall, that series saw the Firebirds score

This isn't surprising given exactly how young the Firebirds were this season, but it was disappointing.

Coach Laxdal talked a lot about how young the team was and how on any given night we would have anywhere from seven to nine rookies that were in the starting lineup. And in a team of 24, that's a pretty big portion of guys out there who are very young.

That being said, the disappointment is palpable: this is the earliest that the Firebirds have ever exited the postseason. Granted, this is only their third year, but we are typically used to seeing hockey for another seven weeks. When put into that perspective, it is really disappointing.

Still, I think there were some really bright spots from this year, including Leyton Roed, Jani Nyman, Nikke Kokko, Ryan Winterton, and Ty Nelson.

At the start of the season, I did indicate to a friend of mine (who also has season tickets) that I had pretty low expectations for the Firebirds and may have even indicated I wasn't sure that they would make the playoffs. The Pacific Division has 10 teams and 7 of them make the playoffs. I may have been a bit too pessimistic in that analysis.

During the first round the Firebirds swept the Wranglers 2-0. This is great, but they did manage to blow a 3-0 lead in game 1. They were able to win that game, but it took two-plus overtime periods (it ended a few minutes into the third OT).

Game two of that series did see the Firebirds win 2-0 with Nikke Kokko getting his first professional AHL shutout, which was great. But it's also a bummer that it took until the 74th game of the season for him to get his first shutout of the season. 1

In six games, the Firebirds were 3-3. They scored four goals, two goals, one goal, five goals, one goal, and no goals. They were 0-for-17 on the power play, and they gave up two, count them two, 3-goal leads.

Needless to say, this was just a hard set of games to watch. The season was hard to watch as a fan. The Firebirds would find ways to lose games. In previous seasons these were the games that they would find some way to win!

There was an article in the Desert Sun that spoke about how proud Coach Laxdal was of the players and how much effort they gave. And I agree, they did give a lot of effort, and he spoke about how young they are.

And again, they are young, and they missed their captain Max McCormick for basically two thirds of the season. But they did have some veteran players out there: Mitchell Stephens, Brandon Biro, Cale Fleury, and Gustav Olofsson. Unfortunately it was just too much to try and overcome.

One of the things that Coach Laxdal also commented on was exactly how much younger next year's team might be. And so, while I am again very excited about watching hockey in six months (which is just so long away), I am lowering my expectations for the 25-26 season even lower than they were this year. I'm really hoping we make the playoffs, but won't be surprised if we don't.

And that's going to be okay ... because even bad hockey is still hockey. And I love hockey, and even when they lose, I love watching the Firebirds.

- During the regular season, there was exactly one shutout, by Victor Ostman.

Uptime Kuma and Claude