How does my Django site connect to the internet anyway?

I created a Django site to troll my cousin Barry who is a big San Diego Padres fan. Their Shortstop is a guy called Fernando Tatis Jr. and he’s really good. Like really good. He’s also young, and arrogant, and is everything an old dude like me doesn’t like about the ‘new generation’ of ball players that are changing the way the game is played.

In all honesty though, it’s fun to watch him play (anyone but the Dodgers).

The thing about him though, is that while he’s really good at the plate, he’s less good at playing defense. He currently leads the league in errors. Not just for all shortstops, but for ALL players!

Anyway, back to the point. I made this Django site called Does Tatis Jr Have an Error Today? It is a simple site that only does one thing ... it tells you if Tatis Jr has made an error today. If he hasn’t, then it says No, and if he has, then it says Yes.

It’s a dumb site that doesn’t do anything else. At all.

But, what it did do was lead me down a path to answer the question, “How does my site connect to the internet anyway?”

Seems like a simple enough question to answer, and it is, but it wasn’t really what I thought when I started.

How it works

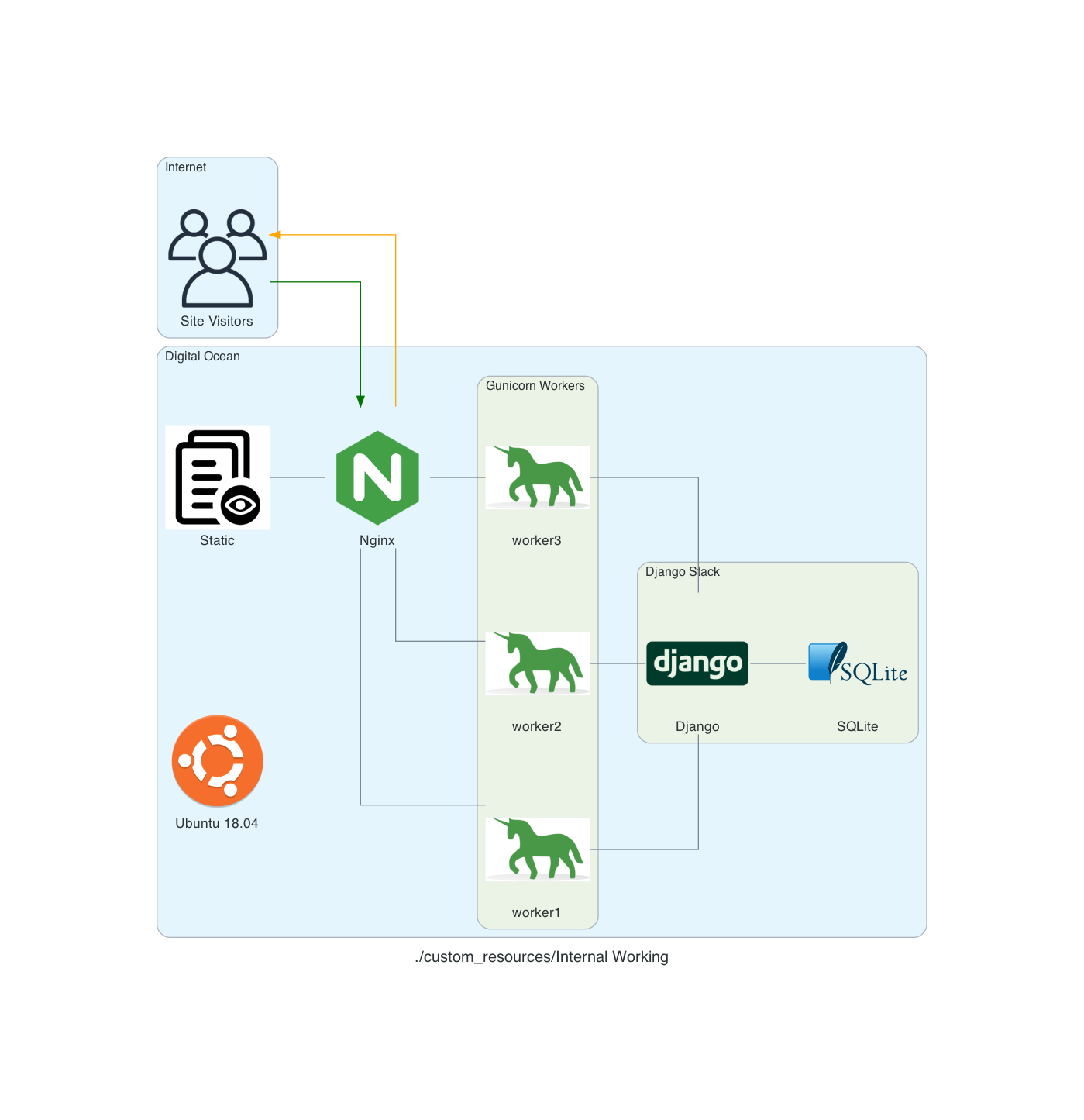

I use a MacBook Pro to work on the code. I then deploy it to a Digital Ocean server using GitHub Actions. But they say, a picture is worth a thousand words, so here's a chart of the workflow:

This shows the development cycle, but that doesn’t answer the question, how does the site connect to the internet!

How is it that when I go to the site, I see anything? I thought I understood it, and when I tried to actually draw it out, turns out I didn't!

After a bit of Googling, I found this and it helped me to create this:

My site runs on an Ubuntu 18.04 server using Nginx as a proxy server. Nginx determines whether the request is for a static asset (a CSS file, for example) or a dynamic one (something served up by the Django app, like answering whether Tatis Jr. has an error today).

If the request is static, Nginx just gets the static data and serves it. If it’s dynamic, it hands the request off to Gunicorn, which then interacts with the Django app.

So, what actually handles the HTTP request? From the serverfault.com answer above:

[T]he simple answer is Gunicorn. The complete answer is both Nginx and Gunicorn handle the request. Basically, Nginx will receive the request and if it's a dynamic request (generally based on URL patterns) then it will give that request to Gunicorn, which will process it, and then return a response to Nginx which then forwards the response back to the original client.
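To make that hand-off concrete, here is a toy sketch in Python. It is not how Nginx or Gunicorn are implemented; handled_by, STATIC_PREFIX and call_app are names I made up for illustration, and the tiny application function just stands in for the Django app that Gunicorn would load.

```python
from wsgiref.util import setup_testing_defaults

# Assumption: static assets live under /static/, like a `location /static/` block.
STATIC_PREFIX = "/static/"


def handled_by(path):
    """Say which piece would answer a request for this path."""
    return "nginx" if path.startswith(STATIC_PREFIX) else "gunicorn"


def application(environ, start_response):
    """Minimal WSGI callable, a stand-in for the Django app."""
    body = b"No"
    headers = [("Content-Type", "text/plain"), ("Content-Length", str(len(body)))]
    start_response("200 OK", headers)
    return [body]


def call_app(path):
    """Call the WSGI app the way a WSGI server would, without any network."""
    environ = {}
    setup_testing_defaults(environ)
    environ["PATH_INFO"] = path
    captured = {}

    def start_response(status, headers):
        captured["status"] = status

    body = b"".join(application(environ, start_response))
    return captured["status"], body


if __name__ == "__main__":
    for path in ("/static/site.css", "/"):
        print(path, "->", handled_by(path))
    print(call_app("/"))
```

The point of the sketch is only the split: anything under the static prefix never reaches Python, and everything else ends up as a call to a WSGI callable.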

In my head, I thought that Nginx was ONLY there to handle the static requests (and it is), but I wasn’t clear on how dynamic requests were handled ... drawing this out really made me stop and ask, “Wait, how DOES that actually work?”

Now I know, and hopefully you do too!

Notes:

These diagrams are generated using the amazing library Diagrams. The code used to generate them is here.

Setting up multiple Django Sites on a Digital Ocean server

If you want to have more than one Django site on a single server, you can. It’s not too hard, and using the Digital Ocean tutorial as a starting point, you can get there.

Using this tutorial as a start, we set things up so that multiple Django sites are served by Gunicorn and Nginx.

Creating systemd Socket and Service Files for Gunicorn

The first thing to do is to set up 2 Django sites on your server. You’ll want to follow the tutorial referenced above and just repeat for each.

Start by creating and opening two systemd socket files for Gunicorn with sudo privileges:

Site 1

sudo vim /etc/systemd/system/site1.socket

Site 2

sudo vim /etc/systemd/system/site2.socket

The contents of the files will look like this:

[Unit]

Description=siteX socket

[Socket]

ListenStream=/run/siteX.sock

[Install]

WantedBy=sockets.target

Where siteX is the site you want to serve from that socket.

Next, create and open a systemd service file for Gunicorn with sudo privileges in your text editor. The service filename should match the socket filename with the exception of the extension:

sudo vim /etc/systemd/system/siteX.service

The contents of the file will look like this:

[Unit]

Description=gunicorn daemon

Requires=siteX.socket

After=network.target

[Service]

User=sammy

Group=www-data

WorkingDirectory=path/to/directory

ExecStart=path/to/gunicorn/directory \
          --access-logfile - \
          --workers 3 \
          --bind unix:/run/siteX.sock \
          myproject.wsgi:application

[Install]

WantedBy=multi-user.target

Again siteX is the socket you want to serve
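Since the socket files only differ by the site name, they can be generated instead of typed. This is a minimal sketch, assuming the socket template shown above; SOCKET_UNIT and socket_units are hypothetical names, and writing the results into /etc/systemd/system/ is left out on purpose.

```python
from string import Template

# Same contents as the socket file above, with siteX turned into a placeholder.
SOCKET_UNIT = Template("""\
[Unit]
Description=$site socket

[Socket]
ListenStream=/run/$site.sock

[Install]
WantedBy=sockets.target
""")


def socket_units(sites):
    """Map each unit filename to its rendered contents."""
    return {f"{site}.socket": SOCKET_UNIT.substitute(site=site) for site in sites}


if __name__ == "__main__":
    for filename, contents in socket_units(["site1", "site2"]).items():
        print(f"# {filename}")
        print(contents)
```

The same approach would work for the service file, as long as the socket path in it matches the one in the socket unit.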

Follow tutorial for testing Gunicorn

Nginx

server {

listen 80;

server_name server_domain_or_IP;

location = /favicon.ico { access_log off; log_not_found off; }

location /static/ {

root /path/to/project;

}

location / {

include proxy_params;

proxy_pass http://unix:/run/siteX.sock;

}

}

Again siteX is the socket you want to serve

Next, link to enabled sites

Test Nginx

Open firewall

Should now be able to see sites at domain names

Automating the deployment

We got everything set up, and now we want to automate the deployment.

Why would we want to do this, you ask? Let’s say that you’ve decided that you need to set up a test version of your site (what some might call UAT) on a new server (at some point I’ll write something up about multiple Django sites on the same server, and part of this will still apply then). How can you do it?

Well you’ll want to write yourself some scripts!

I have a mix of Python and shell scripts set up to do this. They are a bit piecemeal, but they also allow me to run specific parts of the process without having to try and execute a script with ‘commented out’ pieces.

Python Scripts

create_server.py

destroy_droplet.py

Shell Scripts

copy_for_deploy.sh

create_db.sh

create_server.sh

deploy.sh

deploy_env_variables.sh

install-code.sh

setup-server.sh

setup_nginx.sh

setup_ssl.sh

super.sh

upload-code.sh

The Python script create_server.py looks like this:

# create_server.py
import requests
import os
from collections import namedtuple
from operator import attrgetter
from time import sleep

Server = namedtuple('Server', 'created ip_address name')

doat = os.environ['DIGITAL_OCEAN_ACCESS_TOKEN']

# Create Droplet
headers = {
    'Content-Type': 'application/json',
    'Authorization': f'Bearer {doat}',
}

data = <data_keys>

print('>>> Creating Server')
requests.post('https://api.digitalocean.com/v2/droplets', headers=headers, data=data)
print('>>> Server Created')

print('>>> Waiting for Server Stand up')
sleep(90)

print('>>> Getting Droplet Data')
params = (
    ('page', '1'),
    ('per_page', '10'),
)

get_droplets = requests.get('https://api.digitalocean.com/v2/droplets', headers=headers, params=params)

server_list = []
for d in get_droplets.json()['droplets']:
    server_list.append(Server(d['created_at'], d['networks']['v4'][0]['ip_address'], d['name']))

server_list = sorted(server_list, key=attrgetter('created'), reverse=True)
server_ip_address = server_list[0].ip_address

db_name = os.environ['DJANGO_PG_DB_NAME']
db_username = os.environ['DJANGO_PG_USER_NAME']

if server_ip_address != <production_server_id>:
    print('>>> Run server setup')
    os.system(f'./setup-server.sh {server_ip_address} {db_name} {db_username}')
    print(f'>>> Server setup complete. You need to add {server_ip_address} to the ALLOWED_HOSTS section of your settings.py file ')
else:
    print('WARNING: Running Server set up will destroy your current production server. Aborting process')

Earlier I said that I liked Digital Ocean because of its nice API for interacting with its servers (i.e. Droplets). Here we start to see some of that.

The first part of the script uses my Digital Ocean token and some input parameters to create a Droplet from the command line. The sleep(90) gives the Droplet time to come up before I try to get its IP address. Ninety seconds is a bit longer than is needed, but I figure, better safe than sorry … I’m sure that there’s a way to call DO and ask whether the just-created Droplet has an IP address yet, but I haven’t figured it out yet.
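For what it’s worth, here is a rough sketch of what that polling could look like. It is not what my script does. It assumes the droplet payload has a status field and a networks.v4 list whose entries carry ip_address and type, which is my reading of the Digital Ocean API docs; wait_for_ip, droplet_ipv4 and fetch_droplet are names invented for this example. Unlike the script above, it only accepts an address marked public rather than taking the first entry.

```python
import time


def droplet_ipv4(droplet):
    """Return the first public IPv4 address in a droplet payload, or None."""
    for net in droplet.get("networks", {}).get("v4", []):
        if net.get("type") == "public":
            return net["ip_address"]
    return None


def wait_for_ip(fetch_droplet, timeout=180, interval=5, sleep=time.sleep):
    """Call fetch_droplet() until the droplet is active and has an address.

    fetch_droplet is any zero-argument callable returning the droplet dict.
    Raises TimeoutError if nothing shows up within roughly `timeout` seconds.
    """
    waited = 0
    while waited <= timeout:
        droplet = fetch_droplet()
        ip = droplet_ipv4(droplet)
        if droplet.get("status") == "active" and ip:
            return ip
        sleep(interval)
        waited += interval
    raise TimeoutError("droplet did not report an IP address in time")
```

In the real script, fetch_droplet would presumably wrap a requests.get against the endpoint for a single droplet, using the id from the response to the create call. I haven’t wired that up, so treat that part as an assumption to check against the API docs.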

After we create the droplet AND it has an IP address, we get it to pass to the bash script setup-server.sh.

# setup-server.sh

#!/bin/bash

# Create the server on Digital Ocean

export SERVER=$1

# The server IP address must be passed as the 1st argument
if [[ -z "$1" ]]
then
    echo "ERROR: No value set for server ip address"
    exit 1
fi

echo -e "\n>>> Setting up $SERVER"

ssh root@$SERVER /bin/bash << EOF

set -e

echo -e "\n>>> Updating apt sources"

apt-get -qq update

echo -e "\n>>> Upgrading apt packages"

apt-get -qq upgrade

echo -e "\n>>> Installing apt packages"

apt-get -qq install python3 python3-pip python3-venv tree supervisor postgresql postgresql-contrib nginx

echo -e "\n>>> Create User to Run Web App"

if getent passwd burningfiddle

then

echo ">>> User already present"

else

adduser --disabled-password --gecos "" burningfiddle

echo -e "\n>>> Add newly created user to www-data"

adduser burningfiddle www-data

fi

echo -e "\n>>> Make directory for code to be deployed to"

if [[ ! -d "/home/burningfiddle/BurningFiddle" ]]

then

mkdir /home/burningfiddle/BurningFiddle

else

echo ">>> Skipping Deploy Folder creation - already present"

fi

echo -e "\n>>> Create VirtualEnv in this directory"

if [[ ! -d "/home/burningfiddle/venv" ]]

then

python3 -m venv /home/burningfiddle/venv

else

echo ">>> Skipping virtualenv creation - already present"

fi

# I don't think i need this anymore

echo ">>> Start and Enable gunicorn"

systemctl start gunicorn.socket

systemctl enable gunicorn.socket

EOF

./setup_nginx.sh $SERVER

./deploy_env_variables.sh $SERVER

./deploy.sh $SERVER

All of that stuff we did before, logging into the server and running commands, we’re now doing via a script. What the above does is attempt to keep the server in an idempotent state (that is to say you can run it as many times as you want and you don’t get weird artifacts … if you’re a math nerd you may have heard idempotent in Linear Algebra to describe the multiplication of a matrix by itself and returning the original matrix … same idea here!)
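The “check first, then act” guards in the script are what make that possible. Here is the same idea as a small Python sketch; ensure_dir is a made-up helper, and the temporary directory just stands in for the server’s home folder.

```python
import tempfile
from pathlib import Path


def ensure_dir(path):
    """Create the directory if it is missing; report whether anything changed."""
    path = Path(path)
    if path.is_dir():
        return False
    path.mkdir(parents=True)
    return True


with tempfile.TemporaryDirectory() as tmp:
    target = Path(tmp) / "BurningFiddle"
    first = ensure_dir(target)   # creates the folder
    second = ensure_dir(target)  # nothing left to do
    print(first, second)
```

Running it once or ten times leaves the folder in the same state, which is all idempotent means here.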

The one thing that is new here is the part

ssh root@$SERVER /bin/bash << EOF

...

EOF

A block like that says, “take everything in between the two EOF markers and run it on the server I just ssh’d into, using bash.”
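If you wanted to do the same thing from Python rather than bash, the equivalent is to start ssh with /bin/bash as the remote command and feed the script in on stdin. This is a sketch under that assumption; remote_bash_command and run_script are names I invented, and the user defaults to root only because that is what the scripts here use.

```python
import subprocess


def remote_bash_command(server, user="root"):
    """Build the argv for `ssh user@server /bin/bash`."""
    return ["ssh", f"{user}@{server}", "/bin/bash"]


def run_script(argv, script):
    """Send `script` to the process on stdin, the way the heredoc does."""
    return subprocess.run(
        argv, input=script, text=True, capture_output=True, check=True
    )


if __name__ == "__main__":
    # Runs locally through sh so the example works without a server.
    result = run_script(["sh"], "echo hello from the script\n")
    print(result.stdout, end="")
```

One difference worth knowing: with an unquoted EOF, bash expands variables like $SERVER locally before the text is sent, whereas the Python string is sent exactly as written.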

At the end we run 3 shell scripts:

- setup_nginx.sh

- deploy_env_variables.sh

- deploy.sh

Let’s review these scripts

The script setup_nginx.sh copies several files needed for the nginx service:

- gunicorn.service

- gunicorn.socket

- nginx.conf

It then sets up a link between sites-available and sites-enabled for Nginx and finally restarts Nginx.

# setup_nginx.sh

export SERVER=$1

export sitename=burningfiddle

scp -r ../config/gunicorn.service root@$SERVER:/etc/systemd/system/

scp -r ../config/gunicorn.socket root@$SERVER:/etc/systemd/system/

scp -r ../config/nginx.conf root@$SERVER:/etc/nginx/sites-available/$sitename

ssh root@$SERVER /bin/bash << EOF

echo -e ">>> Set up site to be linked in Nginx"

ln -s /etc/nginx/sites-available/$sitename /etc/nginx/sites-enabled

echo -e ">>> Restart Nginx"

systemctl restart nginx

echo -e ">>> Allow Nginx Full access"

ufw allow 'Nginx Full'

EOF

The script deploy_env_variables.sh copies environment variables. There are packages (and other methods) that help to manage environment variables better than this, and that is one of the enhancements I’ll be looking at.

This script captures the values of various environment variables (one at a time) and then passes them through to the server. It then checks whether each variable already has that value on the server and, if not, appends it to the /etc/environment file.

export SERVER=$1

DJANGO_SECRET_KEY=$(printenv DJANGO_SECRET_KEY)
DJANGO_PG_PASSWORD=$(printenv DJANGO_PG_PASSWORD)
DJANGO_PG_USER_NAME=$(printenv DJANGO_PG_USER_NAME)
DJANGO_PG_DB_NAME=$(printenv DJANGO_PG_DB_NAME)
DJANGO_SUPERUSER_PASSWORD=$(printenv DJANGO_SUPERUSER_PASSWORD)

DJANGO_DEBUG=False

ssh root@$SERVER /bin/bash << EOF

if [[ "\$DJANGO_SECRET_KEY" != "$DJANGO_SECRET_KEY" ]]

then

echo "DJANGO_SECRET_KEY=$DJANGO_SECRET_KEY" >> /etc/environment

else

echo ">>> Skipping DJANGO_SECRET_KEY - already present"

fi

if [[ "\$DJANGO_PG_PASSWORD" != "$DJANGO_PG_PASSWORD" ]]

then

echo "DJANGO_PG_PASSWORD=$DJANGO_PG_PASSWORD" >> /etc/environment

else

echo ">>> Skipping DJANGO_PG_PASSWORD - already present"

fi

if [[ "\$DJANGO_PG_USER_NAME" != "$DJANGO_PG_USER_NAME" ]]

then

echo "DJANGO_PG_USER_NAME=$DJANGO_PG_USER_NAME" >> /etc/environment

else

echo ">>> Skipping DJANGO_PG_USER_NAME - already present"

fi

if [[ "\$DJANGO_PG_DB_NAME" != "$DJANGO_PG_DB_NAME" ]]

then

echo "DJANGO_PG_DB_NAME=$DJANGO_PG_DB_NAME" >> /etc/environment

else

echo ">>> Skipping DJANGO_PG_DB_NAME - already present"

fi

if [[ "\$DJANGO_DEBUG" != "$DJANGO_DEBUG" ]]

then

echo "DJANGO_DEBUG=$DJANGO_DEBUG" >> /etc/environment

else

echo ">>> Skipping DJANGO_DEBUG - already present"

fi

EOF
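The repeated if/else blocks above all follow one pattern, so here is that pattern as a single Python function. It is a sketch, not a drop-in replacement: merge_environment is a hypothetical name, and it checks whether a key is already present in the file, whereas the shell script compares the value in the remote shell’s environment.

```python
def merge_environment(existing_text, wanted):
    """Return existing_text plus a KEY=value line for each key not already set."""
    present = set()
    for line in existing_text.splitlines():
        line = line.strip()
        if line and not line.startswith("#") and "=" in line:
            present.add(line.split("=", 1)[0])

    additions = [f"{key}={value}" for key, value in wanted.items() if key not in present]
    if not additions:
        return existing_text

    # Make sure the new lines start on their own line.
    separator = "" if not existing_text or existing_text.endswith("\n") else "\n"
    return existing_text + separator + "\n".join(additions) + "\n"


if __name__ == "__main__":
    current = 'PATH="/usr/bin"\nDJANGO_DEBUG=False\n'
    print(merge_environment(current, {"DJANGO_DEBUG": "False", "DJANGO_SECRET_KEY": "abc"}))
```

Because keys that are already there get skipped, running it twice gives the same file as running it once.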

The deploy.sh calls two scripts itself:

# deploy.sh

#!/bin/bash

set -e

# Deploy Django project.

export SERVER=$1

#./scripts/backup-database.sh

./upload-code.sh

./install-code.sh

The final two scripts!

The upload-code.sh script uploads the files to the deploy folder of the server, while the install-code.sh script moves all of the files to where they need to be on the server and restarts any services.

# upload-code.sh

#!/bin/bash

set -e

echo -e "\n>>> Copying Django project files to server."

if [[ -z "$SERVER" ]]

then

echo "ERROR: No value set for SERVER."

exit 1

fi

echo -e "\n>>> Preparing scripts locally."

rm -rf ../../deploy/*

rsync -rv --exclude 'htmlcov' --exclude 'venv' --exclude '*__pycache__*' --exclude '*staticfiles*' --exclude '*.pyc' ../../BurningFiddle/* ../../deploy

echo -e "\n>>> Copying files to the server."

ssh root@$SERVER "rm -rf /root/deploy/"

scp -r ../../deploy root@$SERVER:/root/

echo -e "\n>>> Finished copying Django project files to server."

And finally,

# install-code.sh

#!/bin/bash

# Install Django app on server.

set -e

echo -e "\n>>> Installing Django project on server."

if [[ -z "$SERVER" ]]

then

echo "ERROR: No value set for SERVER."

exit 1

fi

echo $SERVER

ssh root@$SERVER /bin/bash << EOF

set -e

echo -e "\n>>> Activate the Virtual Environment"

source /home/burningfiddle/venv/bin/activate

cd /home/burningfiddle/

echo -e "\n>>> Deleting old files"

rm -rf /home/burningfiddle/BurningFiddle

echo -e "\n>>> Copying new files"

cp -r /root/deploy/ /home/burningfiddle/BurningFiddle

echo -e "\n>>> Installing Python packages"

pip install -r /home/burningfiddle/BurningFiddle/requirements.txt

echo -e "\n>>> Running Django migrations"

python /home/burningfiddle/BurningFiddle/manage.py migrate

echo -e "\n>>> Creating Superuser"

python /home/burningfiddle/BurningFiddle/manage.py createsuperuser --noinput --username bfadmin --email rcheley@gmail.com || true

echo -e "\n>>> Load Initial Data"

python /home/burningfiddle/BurningFiddle/manage.py loaddata /home/burningfiddle/BurningFiddle/fixtures/pages.json

echo -e "\n>>> Collecting static files"

python /home/burningfiddle/BurningFiddle/manage.py collectstatic

echo -e "\n>>> Reloading Gunicorn"

systemctl daemon-reload

systemctl restart gunicorn

EOF

echo -e "\n>>> Finished installing Django project on server."

Preparing the code for deployment to Digital Ocean

OK, we’ve got our server ready for our Django App. We set up Gunicorn and Nginx. We created the user which will run our app and set up all of the folders that will be needed.

Now, we work on deploying the code!

Deploying the Code

There are 3 parts for deploying our code:

- Collect Locally

- Copy to Server

- Place in correct directory

Why don’t we just copy to the spot on the server we want to finally be in? Because we’ll need to restart Nginx once we’re fully deployed and it’s easier to have that done in 2 steps than in 1.

Collect the Code Locally

My project is structured such that there is a deploy folder on the same level as my Django project folder.

We want to clear out any old code. To do this we run from the same level that the Django Project Folder is in

rm -rf deploy/*

This will remove ALL of the files and folders that were present. Next, we want to copy the data from the yoursite folder to the deploy folder:

rsync -rv --exclude 'htmlcov' --exclude 'venv' --exclude '*__pycache__*' --exclude '*staticfiles*' --exclude '*.pyc' yoursite/* deploy

Again, run this from the same folder. I’m using rsync here as it has a really good API for allowing me to exclude items (I’m sure the above could be done better with a mix of regular expressions, but this gets the job done).
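For comparison, the clear-then-copy step can also be sketched with Python’s standard library. This assumes the same exclude patterns as the rsync command; collect_for_deploy is a name I made up, and unlike rm -rf deploy/* it removes and recreates the deploy folder itself.

```python
import shutil
from pathlib import Path

# Same patterns as the --exclude flags in the rsync command.
EXCLUDES = ("htmlcov", "venv", "*__pycache__*", "*staticfiles*", "*.pyc")


def collect_for_deploy(project_dir, deploy_dir):
    """Replace deploy_dir with a filtered copy of project_dir.

    Returns the copied files as sorted paths relative to deploy_dir.
    """
    deploy_dir = Path(deploy_dir)
    if deploy_dir.exists():
        shutil.rmtree(deploy_dir)  # clear out any old code
    shutil.copytree(project_dir, deploy_dir, ignore=shutil.ignore_patterns(*EXCLUDES))
    return sorted(
        path.relative_to(deploy_dir).as_posix()
        for path in deploy_dir.rglob("*")
        if path.is_file()
    )
```

Calling collect_for_deploy("yoursite", "deploy") from the folder that holds both would leave deploy containing only the files you actually want on the server.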

Copy to the Server

We have the files collected, now we need to copy them to the server.

This is done in two steps. Again, we want to remove ALL of the files in the deploy folder on the server (see rationale from above)

ssh root@$SERVER "rm -rf /root/deploy/"

Next, we use scp to secure copy the files to the server

scp -r deploy root@$SERVER:/root/

Our files are now on the server!

Installing the Code

We have several steps to get through in order to install the code. They are:

- Activate the Virtual Environment

- Deleting old files

- Copying new files

- Installing Python packages

- Running Django migrations

- Collecting static files

- Reloading Gunicorn

Before we can do any of this we’ll need to ssh into our server. Once that’s done, we can proceed with the steps below.

Above we created our virtual environment in a folder called venv located in /home/yoursite/. We’ll want to activate it now (1)

source /home/yoursite/venv/bin/activate

Next, we change directory into the yoursite home directory

cd /home/yoursite/

Now, we delete the old files from the last install (2):

rm -rf /home/yoursite/yoursite

Copy our new files (3)

cp -r /root/deploy/ /home/yoursite/yoursite

Install our Python packages (4)

pip install -r /home/yoursite/yoursite/requirements.txt

Run any migrations (5)

python /home/yoursite/yoursite/manage.py migrate

Collect Static Files (6)

python /home/yoursite/yoursite/manage.py collectstatic

Finally, reload Gunicorn

systemctl daemon-reload

systemctl restart gunicorn

When we visit our domain we should see our Django site.
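The order of those steps matters, and a failure partway through should stop everything (that’s what set -e does in the shell scripts). Here is a small sketch of that behavior in Python. run_steps and INSTALL_STEPS are hypothetical, and calling the virtual environment’s pip and python by full path instead of activating it first is my own substitution.

```python
import subprocess
import sys

# The install steps from above, written as data. Paths assume the layout in this post.
INSTALL_STEPS = [
    ("install packages", ["/home/yoursite/venv/bin/pip", "install", "-r",
                          "/home/yoursite/yoursite/requirements.txt"]),
    ("migrate", ["/home/yoursite/venv/bin/python",
                 "/home/yoursite/yoursite/manage.py", "migrate"]),
    ("collect static", ["/home/yoursite/venv/bin/python",
                        "/home/yoursite/yoursite/manage.py", "collectstatic"]),
    ("reload units", ["systemctl", "daemon-reload"]),
    ("restart gunicorn", ["systemctl", "restart", "gunicorn"]),
]


def run_steps(steps):
    """Run each (label, argv) in order and stop at the first failure.

    Returns (labels that succeeded, label that failed or None).
    """
    completed = []
    for label, argv in steps:
        result = subprocess.run(argv)
        if result.returncode != 0:
            return completed, label
        completed.append(label)
    return completed, None


if __name__ == "__main__":
    # Harmless demo steps so this runs anywhere; swap in INSTALL_STEPS on the server.
    ok = [sys.executable, "-c", "raise SystemExit(0)"]
    bad = [sys.executable, "-c", "raise SystemExit(3)"]
    print(run_steps([("first", ok), ("second", bad), ("third", ok)]))
```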

Getting your Domain to point to Your Digital Ocean Server

I use Hover for my domain purchases and management. Why? Because they have a clean, easy to use, not-slimy interface, and because I’ve listened to enough tech podcasts that I’ve drunk the Kool-Aid.

When I was trying to get my Hover Domain to point to my Digital Ocean server it seemed much harder to me than it needed to be. Specifically, I couldn’t find any guide on doing it! Many of the tutorials I did find were basically like, it’s all the same. We’ll show you with GoDaddy and then you can figure it out.

Yes, I can figure it out, but it wasn’t as easy as it could have been. That’s why I’m writing this up.

Digital Ocean

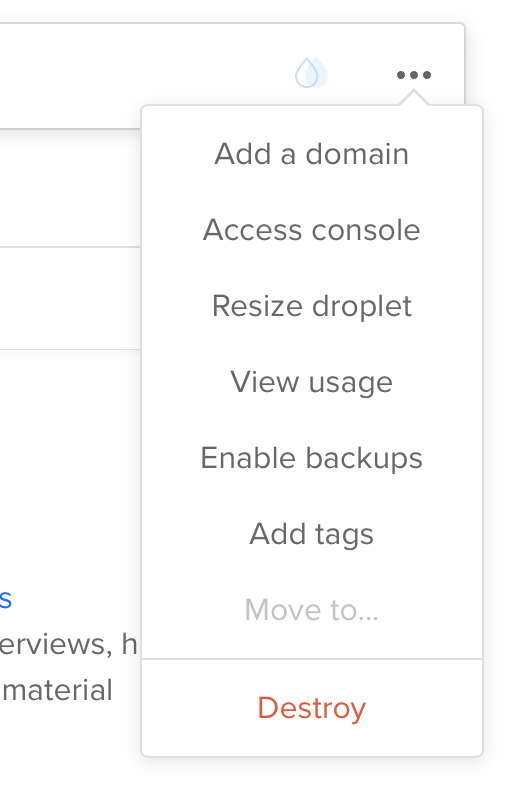

From Droplet screen click ‘Add a Domain’


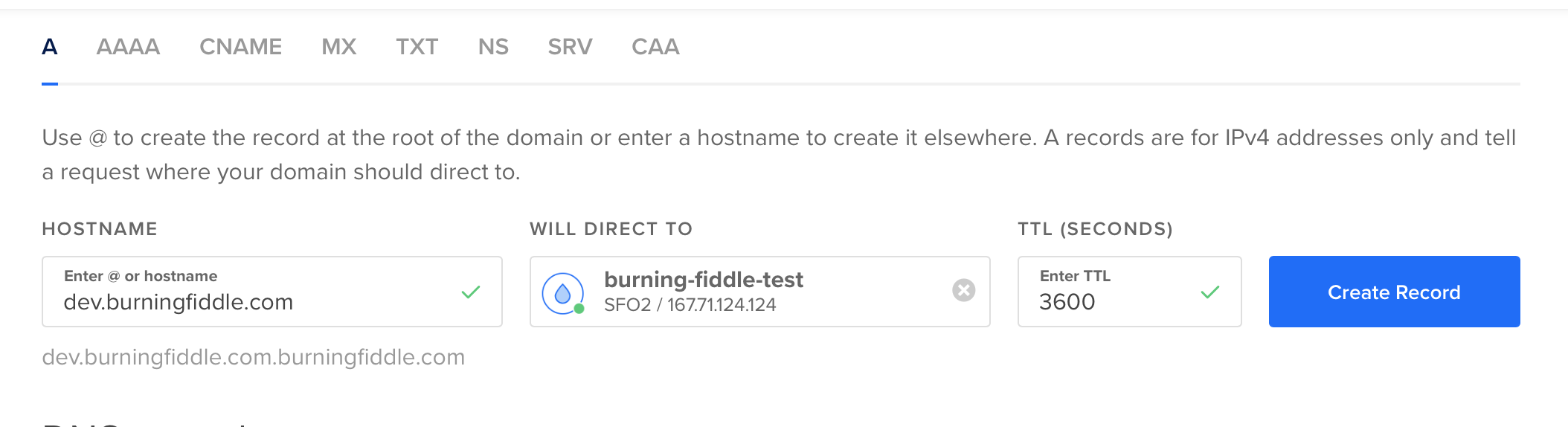

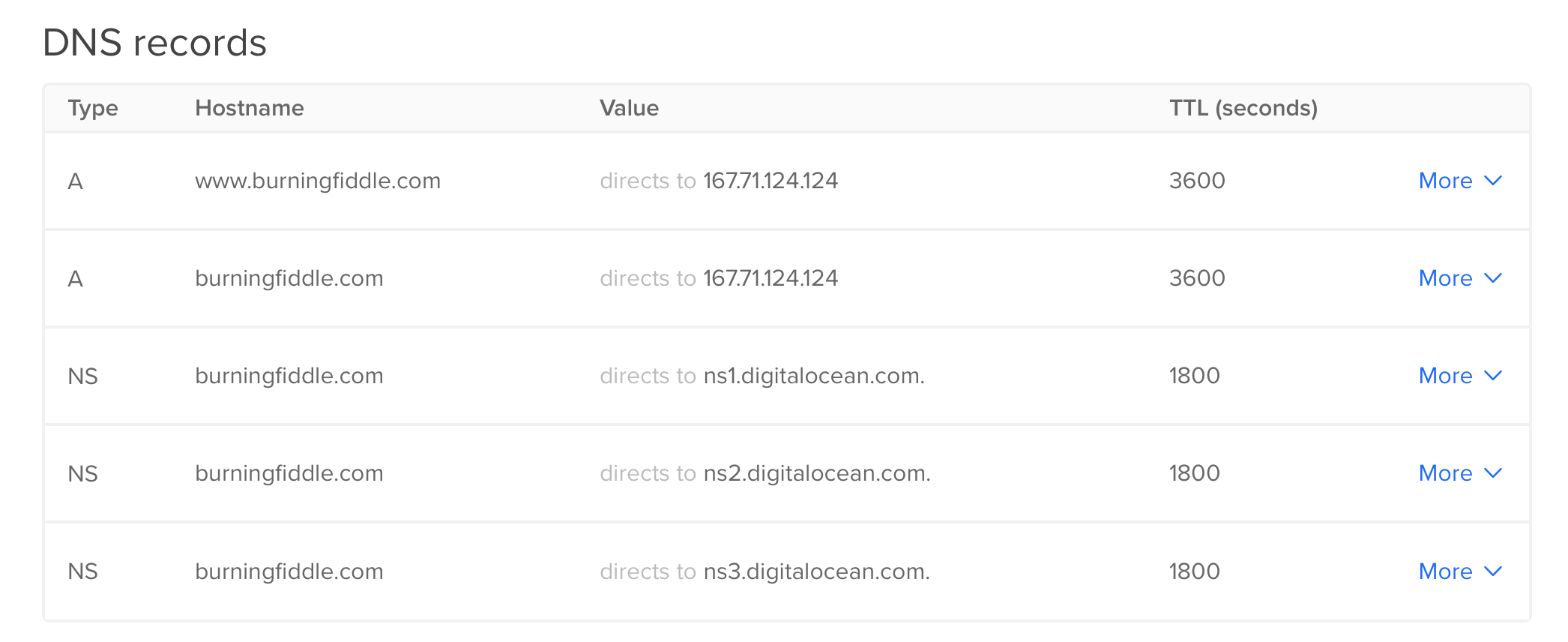

Add 2 ‘A’ records (one for www and one without the www)

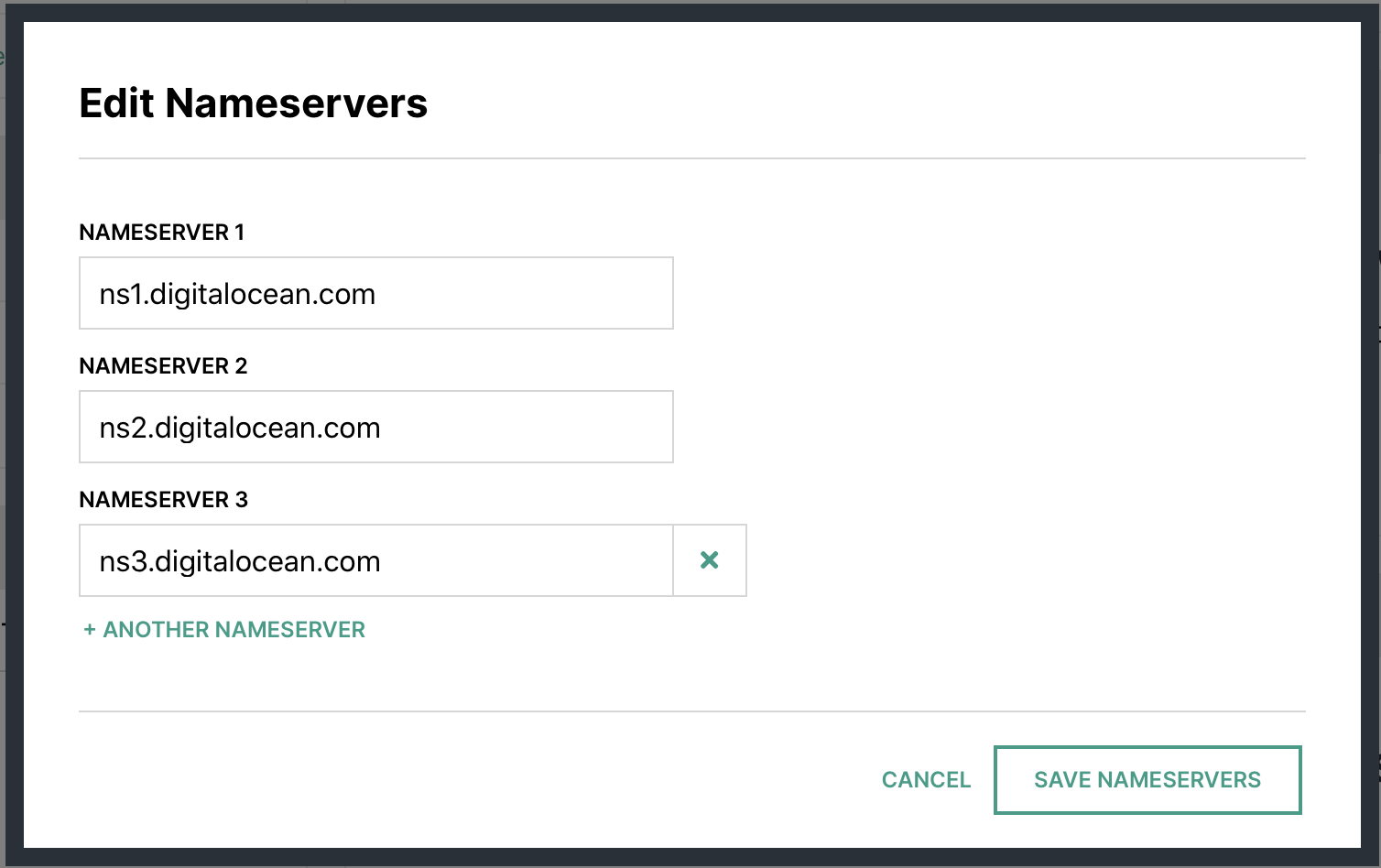

Make note of the name servers

Hover

In your account at Hover.com, change your name servers to point to the Digital Ocean ones from above.

Wait

DNS … does anyone really know how it works?[1] I just know that sometimes when I make a change it’s out there almost immediately for me, and sometimes it takes hours or days.

At this point, you’re just going to potentially need to wait. Why? Because DNS that’s why. Ugh!
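Rather than refreshing the browser, you can ask your own machine what the domain currently resolves to. A minimal sketch, assuming you know the Droplet’s IP address; points_at is a made-up helper, and the resolve parameter exists only so the lookup can be swapped out.

```python
import socket


def points_at(domain, expected_ip, resolve=socket.gethostbyname):
    """Return True if the domain currently resolves to expected_ip (IPv4 only)."""
    try:
        return resolve(domain) == expected_ip
    except OSError:
        # Lookup failures (for example, the name does not resolve yet) land here.
        return False
```

Something like points_at("yoursite.com", "<your droplet ip>") returning True only tells you what your own resolver sees; other people may still get the old answer for a while.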

Setting up directory structure

While we’re waiting for the DNS to propagate, now would be a good time to set up some file structures for when we push our code to the server.

For my code deploy I’ll be using a dedicated user (burningfiddle in my case, yoursite in the commands below). We have to do two things here: create the user, and add them to the www-data user group on our Linux server.

We can run these commands to take care of that:

adduser --disabled-password --gecos "" yoursite

This command adds the user with no password, which means they can’t log in with one until a password has been set. Since this user will NEVER log into the server, we’re done with the user creation piece!

Next, add the user to the proper group

adduser yoursite www-data

Now we have a user and they’ve been added to the group we need. Creating the user also created a directory for them under /home called yoursite. You should now be able to run this command without error

ls /home/yoursite/

If that returns an error indicating no such directory, then you may not have created the user properly.

Now we’re going to make a directory for our code to be run from.

mkdir /home/yoursite/yoursite

To run our Django app we’ll be using a virtual environment. We can create it with Python’s built-in venv module by running this command

python3 -m venv /home/yoursite/venv

Configuring Gunicorn

There are two files needed for Gunicorn to run:

- gunicorn.socket

- gunicorn.service

For our setup, this is what they look like:

# gunicorn.socket

[Unit]

Description=gunicorn socket

[Socket]

ListenStream=/run/gunicorn.sock

[Install]

WantedBy=sockets.target

# gunicorn.service

[Unit]

Description=gunicorn daemon

Requires=gunicorn.socket

After=network.target

[Service]

User=yoursite

EnvironmentFile=/etc/environment

Group=www-data

WorkingDirectory=/home/yoursite/yoursite

ExecStart=/home/yoursite/venv/bin/gunicorn \
          --access-logfile - \
          --workers 3 \
          --bind unix:/run/gunicorn.sock \
          yoursite.wsgi:application

[Install]

WantedBy=multi-user.target

For more on the details of the sections in both gunicorn.service and gunicorn.socket see this article.

Environment Variables

The only environment variables we have to worry about here (since we’re using SQLite) are the DJANGO_SECRET_KEY and DJANGO_DEBUG

We’ll want to edit /etc/environment with our favorite editor (I’m partial to vim, but use whatever you like):

vim /etc/environment

In this file you’ll add your DJANGO_SECRET_KEY and DJANGO_DEBUG. The file will look something like this once you’re done:

PATH="/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin:/usr/games:/usr/local/games"

DJANGO_SECRET_KEY=my_super_secret_key_goes_here

DJANGO_DEBUG=False
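On the Django side, something has to read those values back out. Here is a sketch of how that might look; env_bool and read_settings are hypothetical helpers, not what my settings.py literally contains. The reason for env_bool is that environment variables are always strings, and bool("False") is True in Python, so DJANGO_DEBUG=False needs to be parsed rather than cast.

```python
import os


def env_bool(name, default=False, environ=os.environ):
    """Interpret an environment variable as a boolean."""
    raw = environ.get(name)
    if raw is None:
        return default
    return raw.strip().lower() in {"1", "true", "yes", "on"}


def read_settings(environ=os.environ):
    """Pull the two values this setup cares about out of the environment."""
    return {
        "SECRET_KEY": environ["DJANGO_SECRET_KEY"],  # fail loudly if missing
        "DEBUG": env_bool("DJANGO_DEBUG", environ=environ),
    }


if __name__ == "__main__":
    fake_env = {"DJANGO_SECRET_KEY": "my_super_secret_key_goes_here", "DJANGO_DEBUG": "False"}
    print(read_settings(fake_env))
```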

Setting up Nginx

Now we need to create our .conf file for Nginx. The file needs to be placed in /etc/nginx/sites-available/$sitename where $sitename is the name of your site.

The final file will look (something) like this

server {

listen 80;

server_name www.yoursite.com yoursite.com;

location = /favicon.ico { access_log off; log_not_found off; }

location /static/ {

root /home/yoursite/yoursite/;

}

location / {

include proxy_params;

proxy_pass http://unix:/run/gunicorn.sock;

}

}

The .conf file above tells Nginx to listen for requests to either www.yoursite.com or yoursite.com. Requests under /static/ are served from /home/yoursite/yoursite/, which is where the files for our Django project are located, and everything else is passed to Gunicorn through the /run/gunicorn.sock socket.

With that in place, all that’s left to do is enable it by running the following, replacing $sitename with the name of your file

ln -s /etc/nginx/sites-available/$sitename /etc/nginx/sites-enabled

You’ll want to run

nginx -t

to make sure there aren’t any errors. If no errors occur you’ll need to restart Nginx

systemctl restart nginx

The last thing to do is to allow full access to Nginx. You do this by running

ufw allow 'Nginx Full'

[1] Probably just [Julia Evans](https://jvns.ca/blog/how-updating-dns-works/)

Logging in a Django App

Per the Django Documentation you can set up

A list of all the people who get code error notifications. When DEBUG=False and AdminEmailHandler is configured in LOGGING (done by default), Django emails these people the details of exceptions raised in the request/response cycle.

In order to set this up you need to include in your settings.py file something like:

ADMINS = [

('John', 'john@example.com'),

('Mary', 'mary@example.com')

]

The difficulties I always ran into were:

- How to set up the AdminEmailHandler

- How to set up a way to actually email from the Django Server

Again, per the Django Documentation:

Django provides one log handler in addition to those provided by the Python logging module

Reading through the documentation didn’t really help me all that much. The docs show the following example:

'handlers': {
    'mail_admins': {
        'level': 'ERROR',
        'class': 'django.utils.log.AdminEmailHandler',
        'include_html': True,
    }
},

That’s great, but there’s not a direct link (that I could find) to the example of how to configure the logging in that section. It is instead at the VERY bottom of the documentation page in the Contents section in the Configured logging > Examples section ... and you really need to know that you have to look for it!

The important thing to do is to include the above in the appropriate LOGGING setting, like this:

LOGGING = {
    'version': 1,
    'disable_existing_loggers': False,
    'handlers': {
        'mail_admins': {
            'level': 'ERROR',
            'class': 'django.utils.log.AdminEmailHandler',
            'include_html': True,
        },
    },
}
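To see what the 'level': 'ERROR' line actually does without needing Django or an email server, here is a standard-library-only sketch. ListHandler is a stand-in I wrote for AdminEmailHandler (it collects messages in a list instead of emailing them), and the demo logger is made up for the example.

```python
import logging
import logging.config


class ListHandler(logging.Handler):
    """Stand-in for AdminEmailHandler: remembers messages instead of emailing."""

    def __init__(self):
        super().__init__()
        self.messages = []

    def emit(self, record):
        self.messages.append(record.getMessage())


collector = ListHandler()

LOGGING = {
    "version": 1,
    "disable_existing_loggers": False,
    "handlers": {
        "mail_admins": {
            "level": "ERROR",
            # "()" tells dictConfig to call this factory instead of importing a class.
            "()": lambda: collector,
        },
    },
    "loggers": {
        "demo": {"handlers": ["mail_admins"], "level": "DEBUG", "propagate": False},
    },
}

logging.config.dictConfig(LOGGING)

log = logging.getLogger("demo")
log.warning("below the handler level, so it is dropped")
log.error("something broke")
print(collector.messages)
```

Only the error makes it to the handler. With the real AdminEmailHandler, that is the difference between getting an email and not getting one.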

Sending an email with Logging information

We’ve got the logging and it will be sent via email, but there’s no way for the email to get sent out yet!

In order to accomplish this I use SendGrid. No real reason other than that’s what I’ve used in the past.

There are great tutorials online for how to get SendGrid integrated with Django, so I won’t rehash that here. I’ll just drop the settings I used in my settings.py

SENDGRID_API_KEY = env("SENDGRID_API_KEY")

EMAIL_HOST = "smtp.sendgrid.net"

EMAIL_HOST_USER = "apikey"

EMAIL_HOST_PASSWORD = SENDGRID_API_KEY

EMAIL_PORT = 587

EMAIL_USE_TLS = True

One final thing I needed to do was to update the email address that was being used to send the email. By default it uses root@localhost which isn’t ideal.

You can override this by setting

SERVER_EMAIL = "myemail@mydomain.tld"

With those three settings, everything should just work.

Making it easy to ssh into a remote server

Logging into a remote server is a drag. Needing to remember the password (or get it from 1Password); needing to remember the IP address of the remote server. Ugh.

It’d be so much easier if I could just

ssh username@servername

and get into the server.

And it turns out, you can. You just need to do two simple things.

Simple thing the first: Update the hosts file on your local computer to map the IP address to a memorable name.

The hosts file is located at /etc/hosts (at least on *nix based systems).

Go to the hosts file in your favorite editor … my current favorite editor for simple stuff like this is vim.

Once there, add the IP address you don’t want to have to remember, and then a name that you will remember. For example:

67.176.220.115 easytoremembername

One thing to keep in mind, you’ll already have some entries in this file. Don’t mess with them. Leave them there. Seriously … it’ll be better for everyone if you do.
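If you’d rather not hand-edit the file, the “add my line, leave everything else alone” rule is easy to express in code. This is just a sketch that works on the file’s text; add_host_entry is a hypothetical helper, and actually writing /etc/hosts would still need sudo.

```python
def add_host_entry(hosts_text, ip, name):
    """Append `ip name` unless some line already maps that name."""
    for line in hosts_text.splitlines():
        fields = line.split("#", 1)[0].split()  # ignore comments
        if len(fields) >= 2 and name in fields[1:]:
            return hosts_text  # already there, leave the file untouched
    separator = "" if not hosts_text or hosts_text.endswith("\n") else "\n"
    return f"{hosts_text}{separator}{ip} {name}\n"


if __name__ == "__main__":
    original = "127.0.0.1 localhost\n"
    print(add_host_entry(original, "67.176.220.115", "easytoremembername"), end="")
```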

Simple thing the second: Generate a public-private key and share the public key with the remote server

From the terminal run the command ssh-keygen -t rsa. This will generate a public and private key. You will be asked for a location to save the keys to. The default (on MacOS) is /Users/username/.ssh/id_rsa. I tend to accept the default (no reason not to) and leave the passphrase blank (this means you won’t have to enter a password which is what we’re looking for in the first place!)

Next, we copy the public key to the host(s) you want to access using the command

ssh-copy-id <username>@<hostname>

for example:

ssh-copy-id pi@rpicamera

The first time you do this you will get a message asking you if you’re sure you want to do this. Type in yes and you’re good to go.

One thing to note, doing this updates the file known_hosts. If, for some reason, the server you are ssh-ing to needs to be rebuilt (i.e. you have to keep destroying your Digital Ocean Ubuntu server because you can’t get the static files to be served properly for your Django project) then you need to go to the known_hosts file and remove the entry for that known host.

When you do that you’ll be asked about the identity of the server (again). Just say yes and you’re good to go.

If you forget that step then when you try to ssh into the server you get a nasty looking error message saying that the server identities don’t match and you can’t proceed.
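Removing the stale entry by hand means finding the right line in known_hosts. Here is a sketch of that as a function on the file’s text. remove_known_host is a made-up name, and it only understands plain (unhashed) entries; if your known_hosts uses hashed host names, ssh-keygen -R followed by the host name is the tool that handles those.

```python
def remove_known_host(known_hosts_text, host):
    """Drop every line whose host field lists `host`; keep the rest as-is."""
    kept = []
    for line in known_hosts_text.splitlines():
        fields = line.split()
        # The first field is a comma-separated list of names and addresses.
        hosts = fields[0].split(",") if fields else []
        if host in hosts:
            continue
        kept.append(line)
    return "\n".join(kept) + ("\n" if kept else "")


if __name__ == "__main__":
    sample = (
        "easytoremembername,67.176.220.115 ssh-ed25519 AAAAkey1\n"
        "other.example ssh-rsa AAAAkey2\n"
    )
    print(remove_known_host(sample, "easytoremembername"), end="")
```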